Lab 9: Multiple Regression I

PSYC 6802 - Introduction to Psychology Statistics

Today’s Packages and Data 🤗

The GGally package (Schloerke et al., 2024) builds upon ggplot2 and includes many functions for creating complex plots. We will be using it to create scatterplot matrices.

As you may have guessed, this is my package 🫣 I use it both to save some functions that I find may be useful in the future. I’ll show some functions to create interactive 3D plots and APA style regression tables.

The flextable package (Gohel et al., 2024) allows to create highly customizable tables. The function that I use to create APA style Word tables makes heavy use of this package.

Predicting Country Happiness

In our data we have many variables that are likely related to happiness. I will just pick 2 of them for the purpose of this Lab. Let’s say that we want to evaluate how Freedom and Corruption predict Happiness. If we run two separate regressions, we find that both have reasonably high \(R^2\) values:

Call:

lm(formula = Happiness_score ~ Freedom, data = dat)

Residuals:

Min 1Q Median 3Q Max

-2.5273 -0.5317 0.1337 0.6656 1.7318

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 2.6234 0.3035 8.644 1.22e-14 ***

Freedom 4.6849 0.4732 9.901 < 2e-16 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 0.9065 on 138 degrees of freedom

Multiple R-squared: 0.4153, Adjusted R-squared: 0.4111

F-statistic: 98.03 on 1 and 138 DF, p-value: < 2.2e-16

Call:

lm(formula = Happiness_score ~ Corruption, data = dat)

Residuals:

Min 1Q Median 3Q Max

-3.5303 -0.7029 0.3538 0.7954 1.8762

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 9.1072 0.6077 14.99 < 2e-16 ***

Corruption -4.2279 0.7106 -5.95 2.1e-08 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 1.058 on 138 degrees of freedom

Multiple R-squared: 0.2041, Adjusted R-squared: 0.1984

F-statistic: 35.4 on 1 and 138 DF, p-value: 2.1e-08Well, That’s just the correlation 🤷

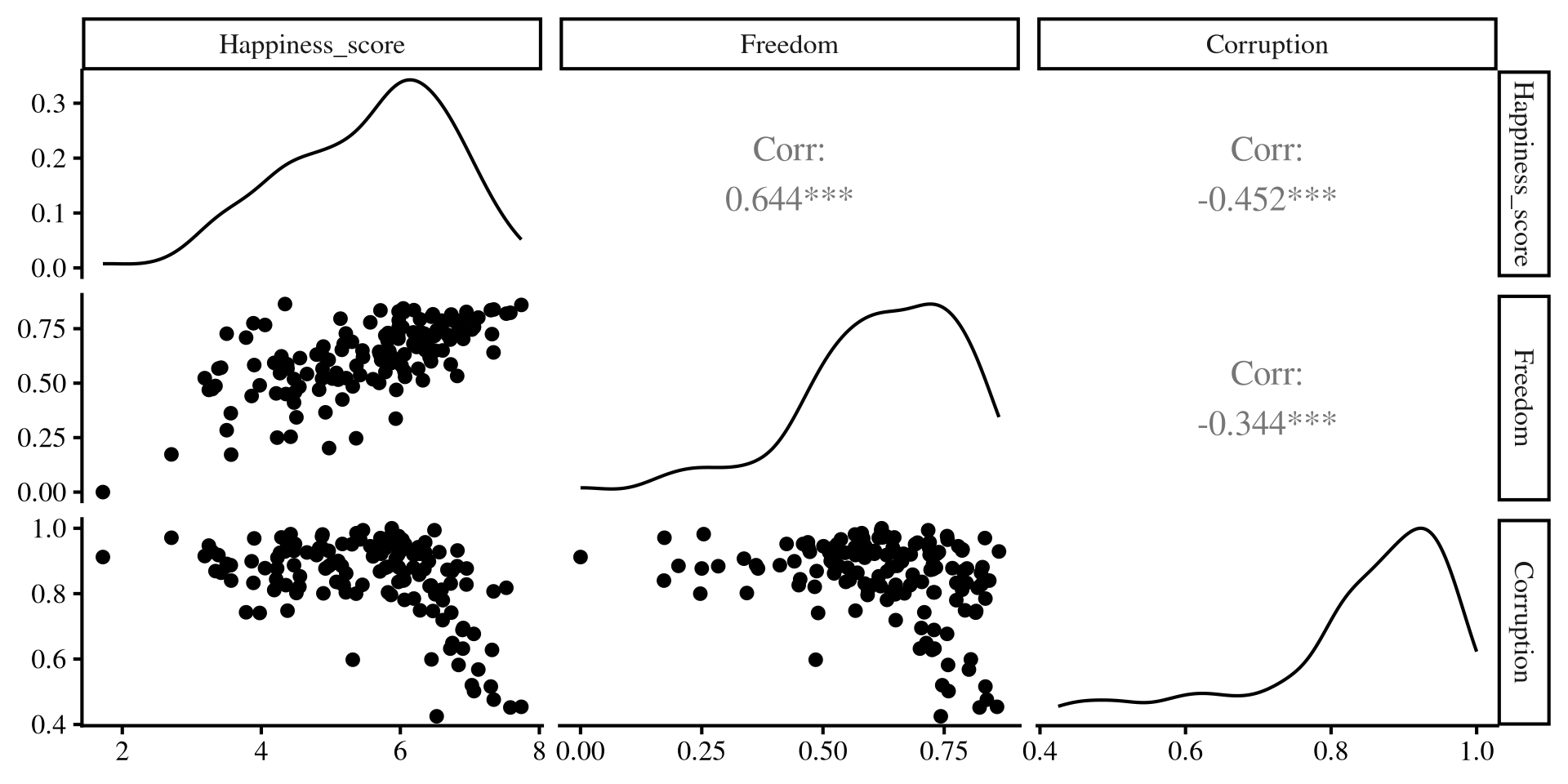

As we have learned, regression with one predictor is just a contrived way of calculating correlations among variables. We can better visualize the relation among out 3 variables with the ggpairs() function from GGally:

Separate regressions miss the points that our predictors share information!

Multiple Independent Variables

Call:

lm(formula = Happiness_score ~ Freedom + Corruption, data = dat)

Residuals:

Min 1Q Median 3Q Max

-2.3574 -0.4326 0.1503 0.5999 1.7335

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 5.0938 0.6875 7.409 1.18e-11 ***

Freedom 4.0320 0.4792 8.415 4.63e-14 ***

Corruption -2.4415 0.6168 -3.959 0.000121 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 0.8618 on 137 degrees of freedom

Multiple R-squared: 0.4753, Adjusted R-squared: 0.4677

F-statistic: 62.06 on 2 and 137 DF, p-value: < 2.2e-16We can include both predictors in our regression by adding variables in the lm() function like so lm(DV ~ IV1 + IV2 + ..., dat). for now I will note two things:

- Both slopes have changed and are not as large as they were in the individual regressions. The regression equations is now:

\[\mathrm{Happiness} = 5.09 + 4.03 \times \mathrm{Freedom} - 2.44 \times \mathrm{Corruption}\]

- The \(R^2\) is roughly \(.48\), which is lower than the sum of the \(R^2\) from the two individual regressions from before (\(.41 + .2 = . 61\)).

What is going on here? 🤔 a graphical illustration may provide some intuition!

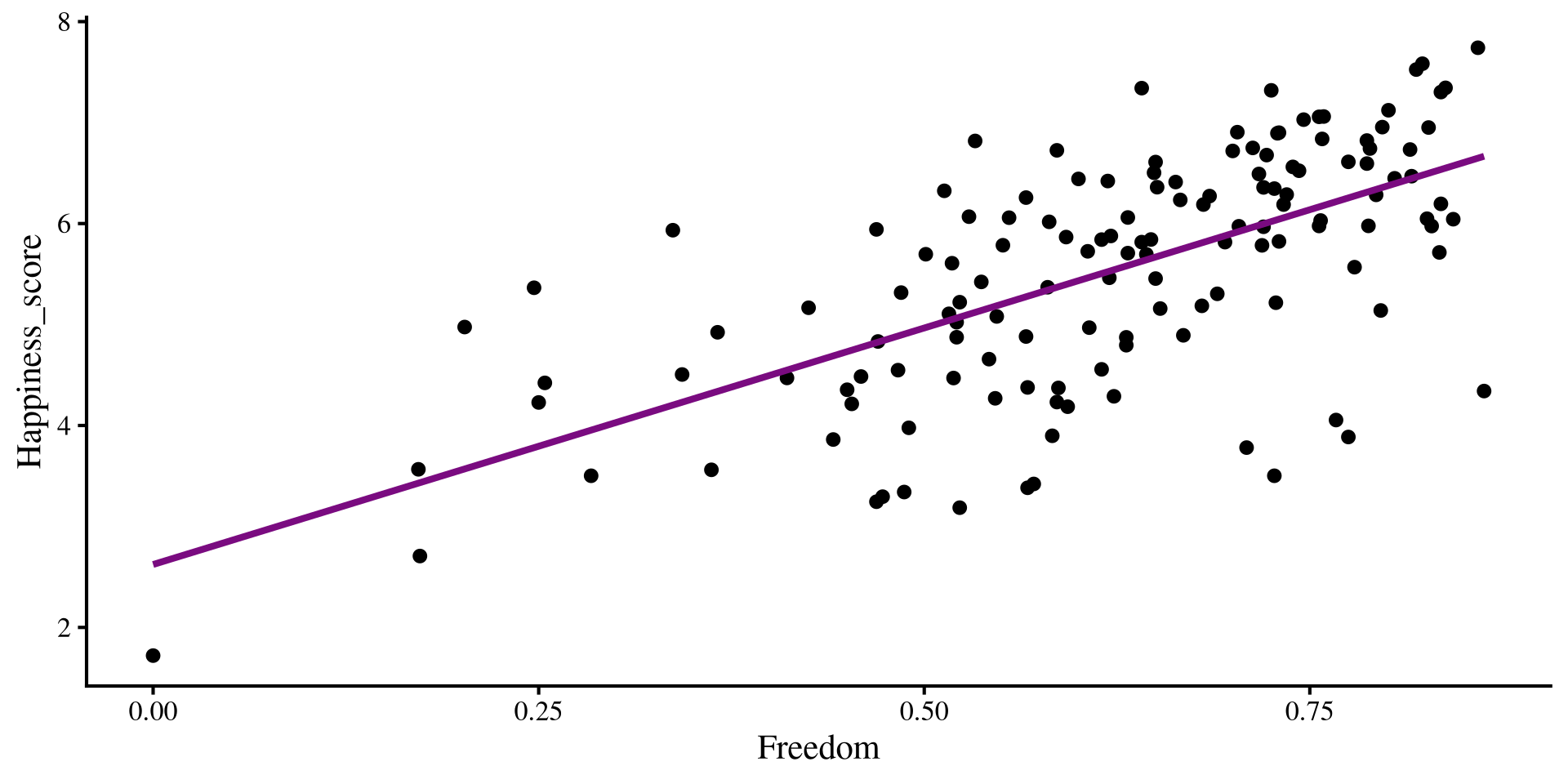

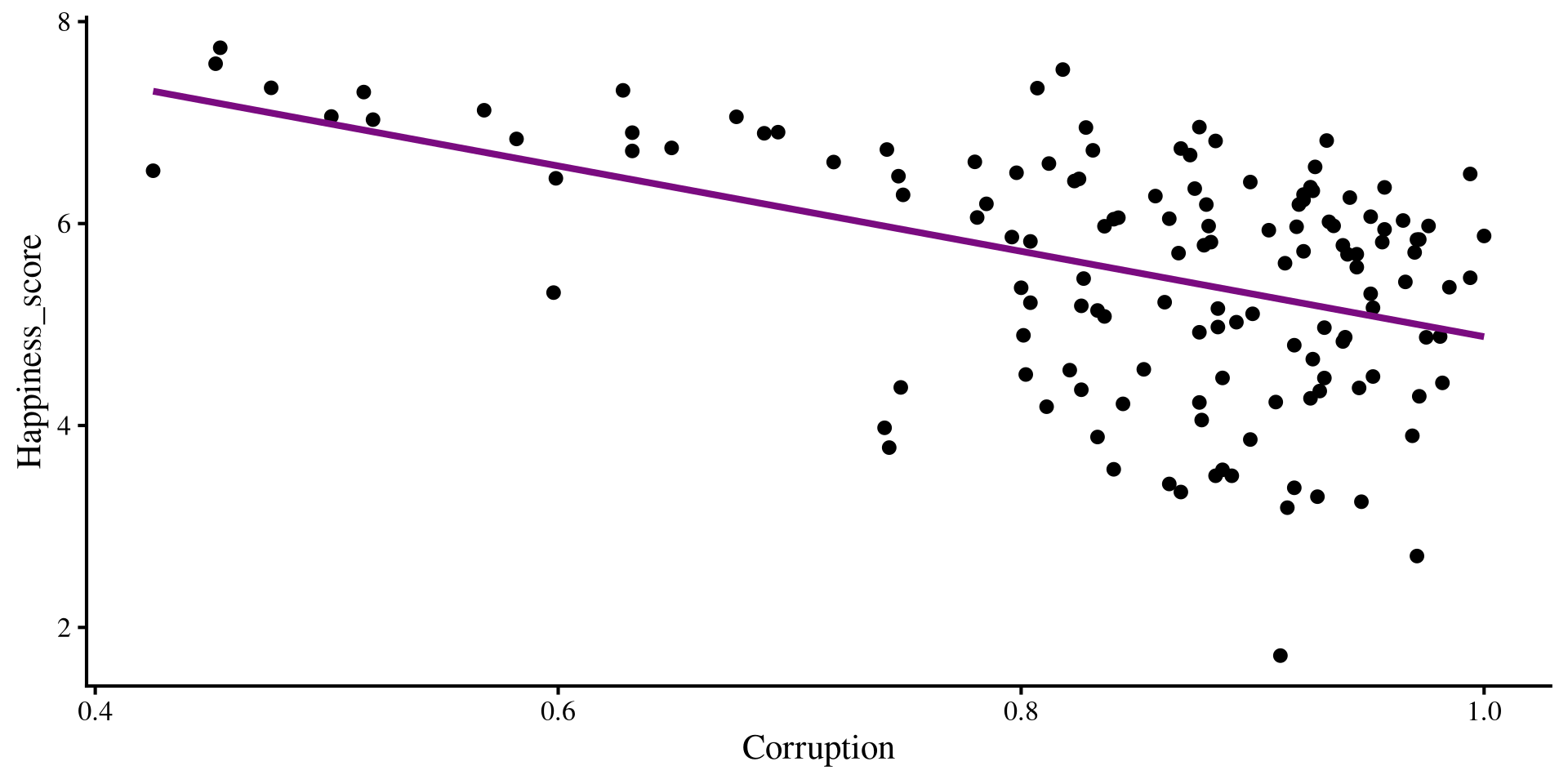

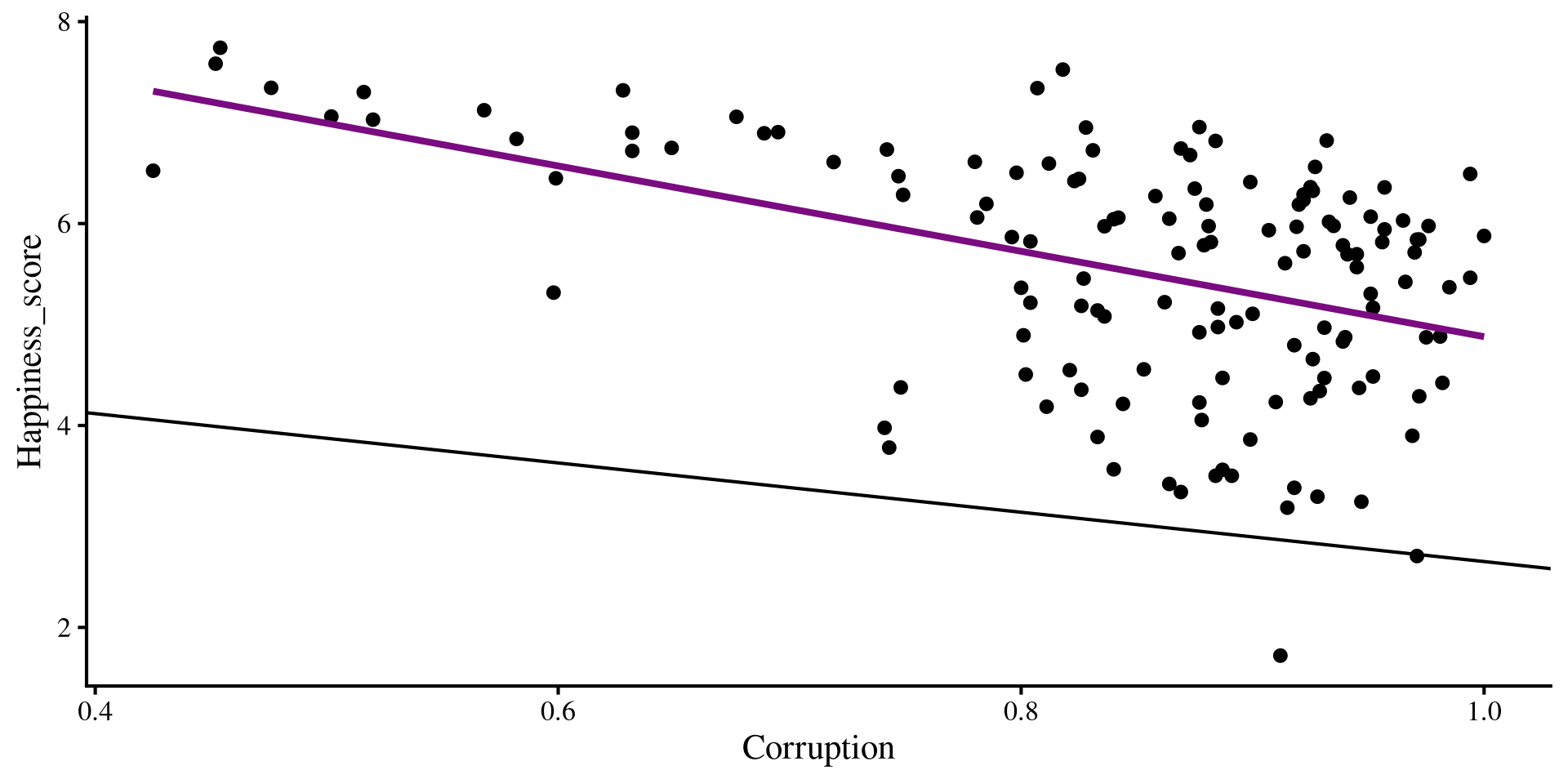

Individual regression plots

Below I plot the individual regression lines for Freedom and Corruption predicting Happiness.

Now, what happens if we try to overlay the regression lines that we got from our multiple regression? Will they “fit the data” better that the regression lines shown here?

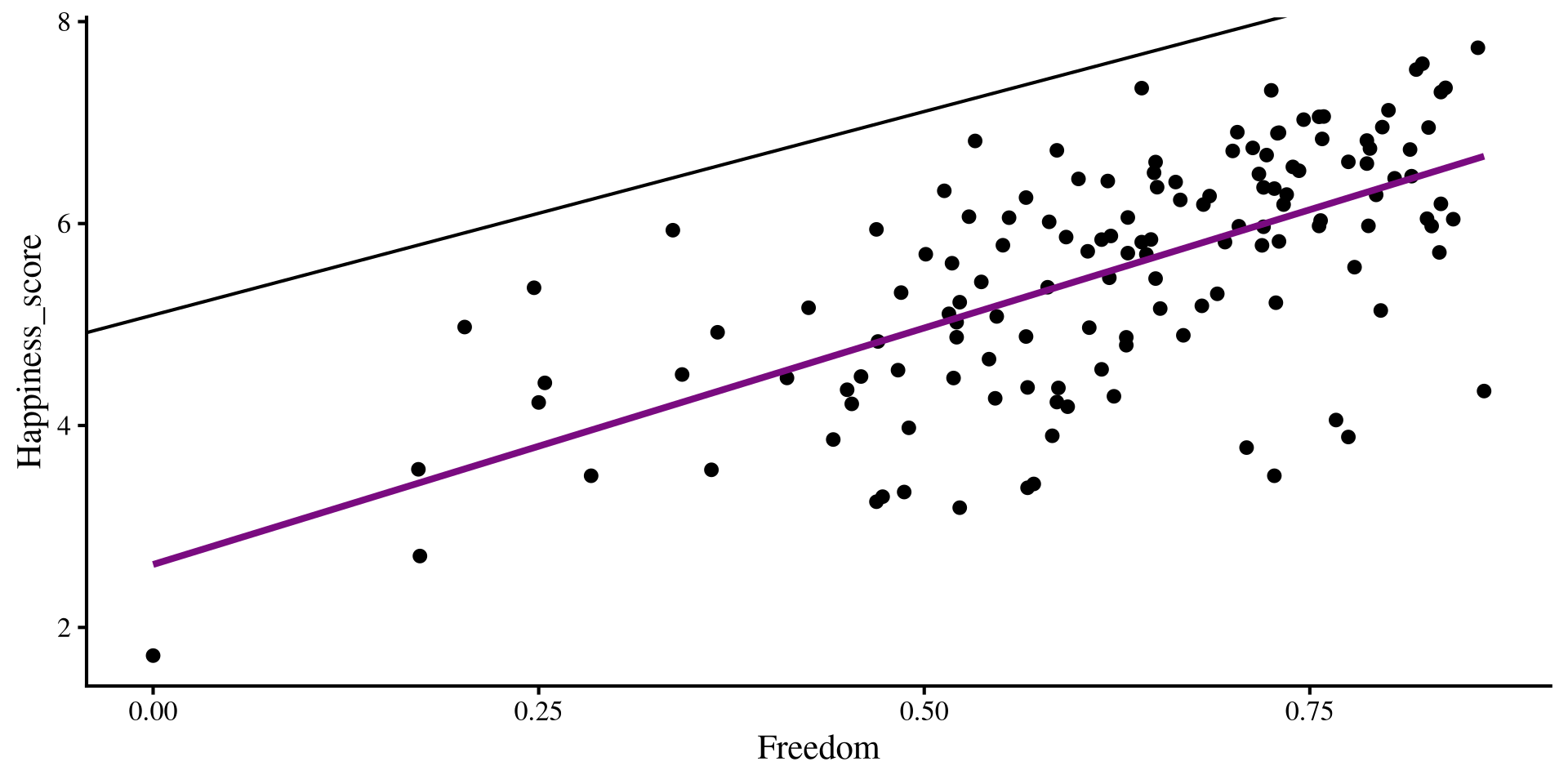

Adding Multiple regression Lines

if we add our multiple regression lines to the plot… (🥁 drum roll)

Wait, we are way off now 🤨 And here I thought multiple regression would be better 😑 …Unsurprisingly, the multiple regression is fine, our perspective is the problem!

A better Perspective

We have 3 variables, Freedom, Corruption, and Happiness, but we are looking at them in 2 dimensions with the individual scatterplots. We need a 3D scatterplot!

What is happening is that the scatterplots are only the sides of this box ‼️

Multiple regression works on this plot on the right, not the individual ones; multiple regression operates on a different dimension.

The Regression Plane

The results from multiple regression create a regression plane, which is the 3D version of a regression line.

The plane works exactly as the regression line. its surface is the best predition of happiness given a value of Freedom and Corruption. The residuals are now the distance from any of the dots to the plane!

Question: What would the plot look like with one extra variable?

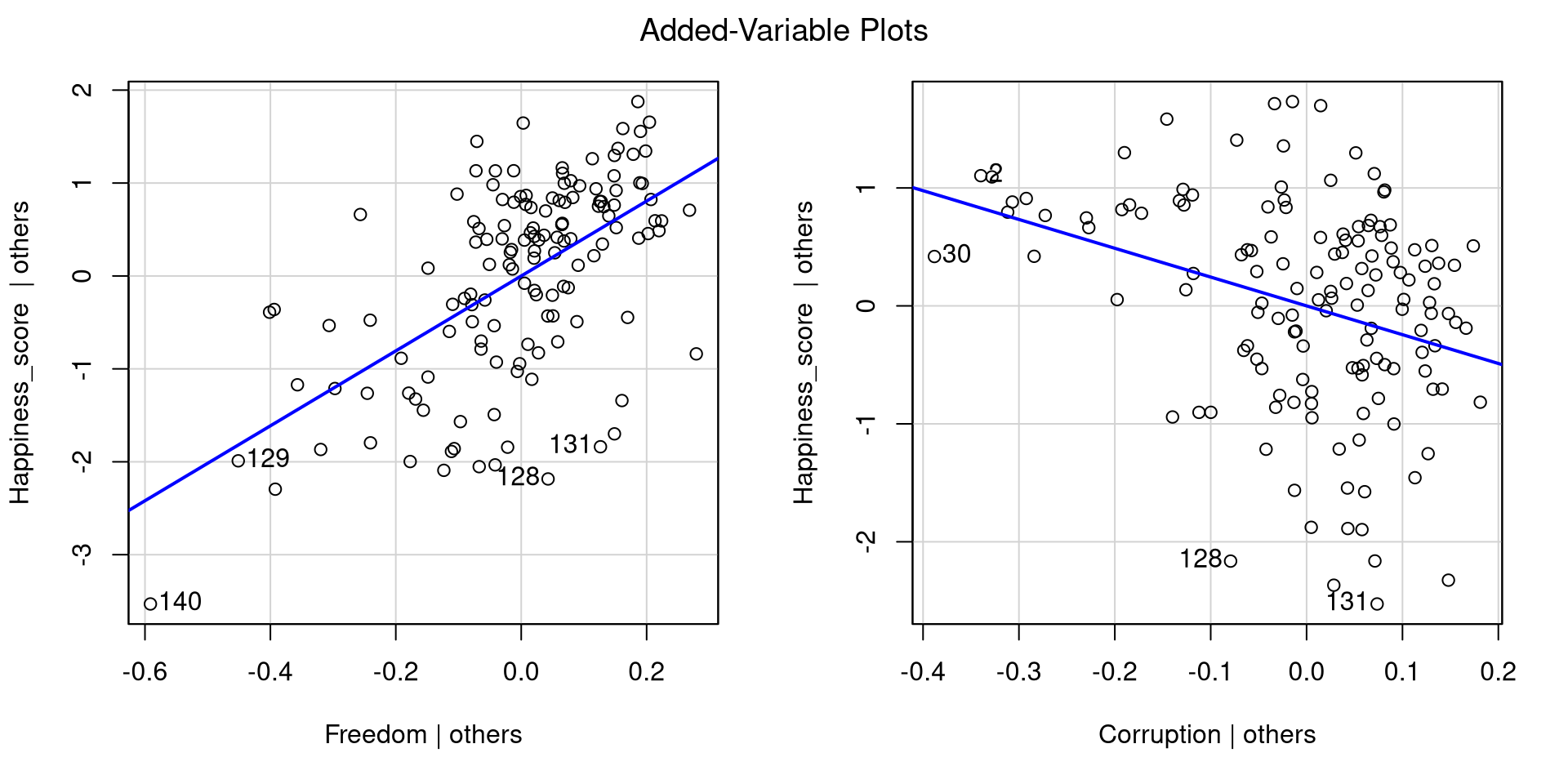

Added Variable Plots

If the 3D plot is a bit much for you, you can also visualize the results of multiple regression with added variable plots. To do that, we can pass out lm() object to the avPlots() function from car:

Essentially, added variable plots give you a visual representation of the relation between an IV and the DV after accounting for all other IVs.

Added variable plots have the advantage of being able to show multiple regression results when more than 2 IVs are involved. Why? Because with 3 IVs you would need a 4D plot, and humans can’t really do that 😔

Interpreting Multiple Regression

Call:

lm(formula = Happiness_score ~ Freedom + Corruption, data = dat)

Residuals:

Min 1Q Median 3Q Max

-2.3574 -0.4326 0.1503 0.5999 1.7335

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 5.0938 0.6875 7.409 1.18e-11 ***

Freedom 4.0320 0.4792 8.415 4.63e-14 ***

Corruption -2.4415 0.6168 -3.959 0.000121 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 0.8618 on 137 degrees of freedom

Multiple R-squared: 0.4753, Adjusted R-squared: 0.4677

F-statistic: 62.06 on 2 and 137 DF, p-value: < 2.2e-16Now that we have looked at our results graphically for a while, let’s interpret the numbers that we get.

intercept: the expected value of

Happinesswhen bothFreedomandCorruptionare at 0.Slope of

Freedom: the expected change inHappinessfor 1-unit increase inFreedom, controlling forCorruption.Slope of

corruption: the expected change inHappinessfor 1-unit increase inCorruption, controlling forFreedom.The \(R^2\) is the variance in

Happinessjointly explained byCorruptionandFreedom.

Note: Instead of “controlling for”, you may also hear “accounting for” or “holding constant”. They are all the same thing.

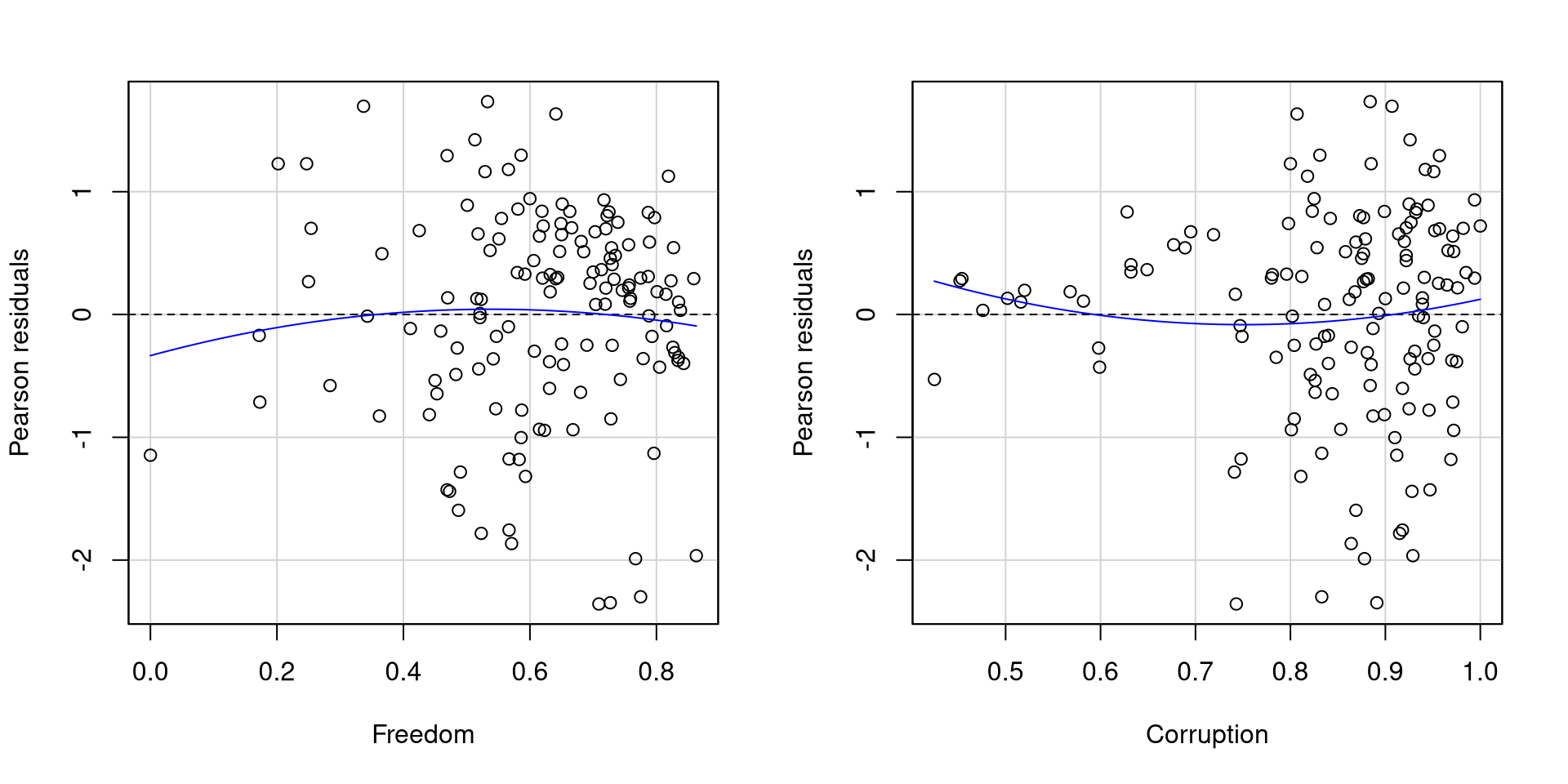

Checking Residuals

Because now we have multiple IVs, we need need to check the residuals against each of them. We can use the residualPlots() function from car to quickly get residual plots for all of our predictors:

As far as residuals go, I would say that these two plots are not too bad.

The residuals of Freedom probably look better than those of Corruption mostly because Corruption has more points clustered at higher values and few points at lowe values.

The Scale of Our Variables and Interpretation

Our regression intercept and slope are:

Because of the way Freddom and Happiness are measures, a “1-unit” increase is already out of range, making the value of the slopes not very representative of the data.

Once again, standardizing our variables can help us produce more interpretable results:

And now all the variables are on the same measurement unit. As a reminder, a value of 0 now represents the mean of the variable, and a value of -1/+1 represents a value 1 SD below/above the mean.

Standardized Multiple Regression

Call:

lm(formula = Happiness_std ~ Freedom_std + Corruption_std, data = dat)

Residuals:

Min 1Q Median 3Q Max

-1.9957 -0.3663 0.1272 0.5079 1.4675

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 1.305e-16 6.166e-02 0.000 1.000000

Freedom_std 5.546e-01 6.591e-02 8.415 4.63e-14 ***

Corruption_std -2.609e-01 6.591e-02 -3.959 0.000121 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 0.7296 on 137 degrees of freedom

Multiple R-squared: 0.4753, Adjusted R-squared: 0.4677

F-statistic: 62.06 on 2 and 137 DF, p-value: < 2.2e-16Now that the variables have been standardized, when can interpret the slopes as follows:

When controlling for

Corruption, 1 SD increase inFreedomwill result in a \(0.55\) SD units increase inHappiness.When controlling for

Freedom, 1 SD increase inCorruptionwill result in a \(0.26\) SD units decrease inHappiness.

In general, I believe you should always report standardized regression results, because your readers always know the scale of all your variables and how to interpret regression coefficients.

APA Style Table for Regression Results

because I hate creating tables manually, at some point I made function that does that for me ✨ Also, creating big tables by hand is generally not a good idea: you waste time and likely make typos.

the regression_flextable() function I made takes in an lm() object and has some optional arguments (see help page). Here I rename the regression coefficients with the var_names = argument.

You can save the table as a .docx file (Word document) by using the save_as_docx() function from the flextable() package. You specify the file name in the path = argument.

Categorical Variables

So far, we have only seen categorical IVs in ANOVA. Because ANOVA is a regression, we can indeed use categorical predictors in a regression. The catch is that we need to trick the regression into thinking that the categorical predictors are numeric variables 🧐

Idea: we think that if we use the mean of Happiness of each of these regions, we will improve our predictions! (this should sound familiar)

Now, we need to somehow express the Region variable in some numeric fashion, such that the regression switches to using the mean of Happiness by Region when it’s predicting Happiness of a country from that region 💡

There actually are many ways of doing this, but we will make use of the fact that regression slopes are the “change in Y per 1-unit change in X”. We will focus on the trick known as dummy coding!

Dummy Coding With Factor Variables

Factor variables have the advantage of automatically doing dummy coding for you! I will filter for just a few regions to make the example a bit easier to follow:

Let’s turn Region into a factor variable and investigate first:

If we look at each row, you can see each variable is assigned a vector of \(0\)s and \(1\)s. Crucially, each vector uniquely identifies the region (e.g., 0, 0, 0 corresponds to Africa)

If we look at the columns, we can see that a \(1\) appears on when the column name corresponds to the row name. Also, there is no column for Africa because we don’t need it, just leaving 0,0,0 will automatically represent Africa.

What does this mean for a regression?

Regression With a Categorical Variable

Call:

lm(formula = Happiness_score ~ Region, data = dat_filt)

Residuals:

Min 1Q Median 3Q Max

-1.77776 -0.47449 0.07755 0.55595 1.46692

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 4.3991 0.1133 38.837 < 2e-16 ***

RegionAsia 1.0857 0.1902 5.710 1.12e-07 ***

RegionEastern Europe 1.5537 0.1875 8.288 4.84e-13 ***

RegionWestern Europe 2.3537 0.1931 12.192 < 2e-16 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 0.7164 on 102 degrees of freedom

Multiple R-squared: 0.6205, Adjusted R-squared: 0.6094

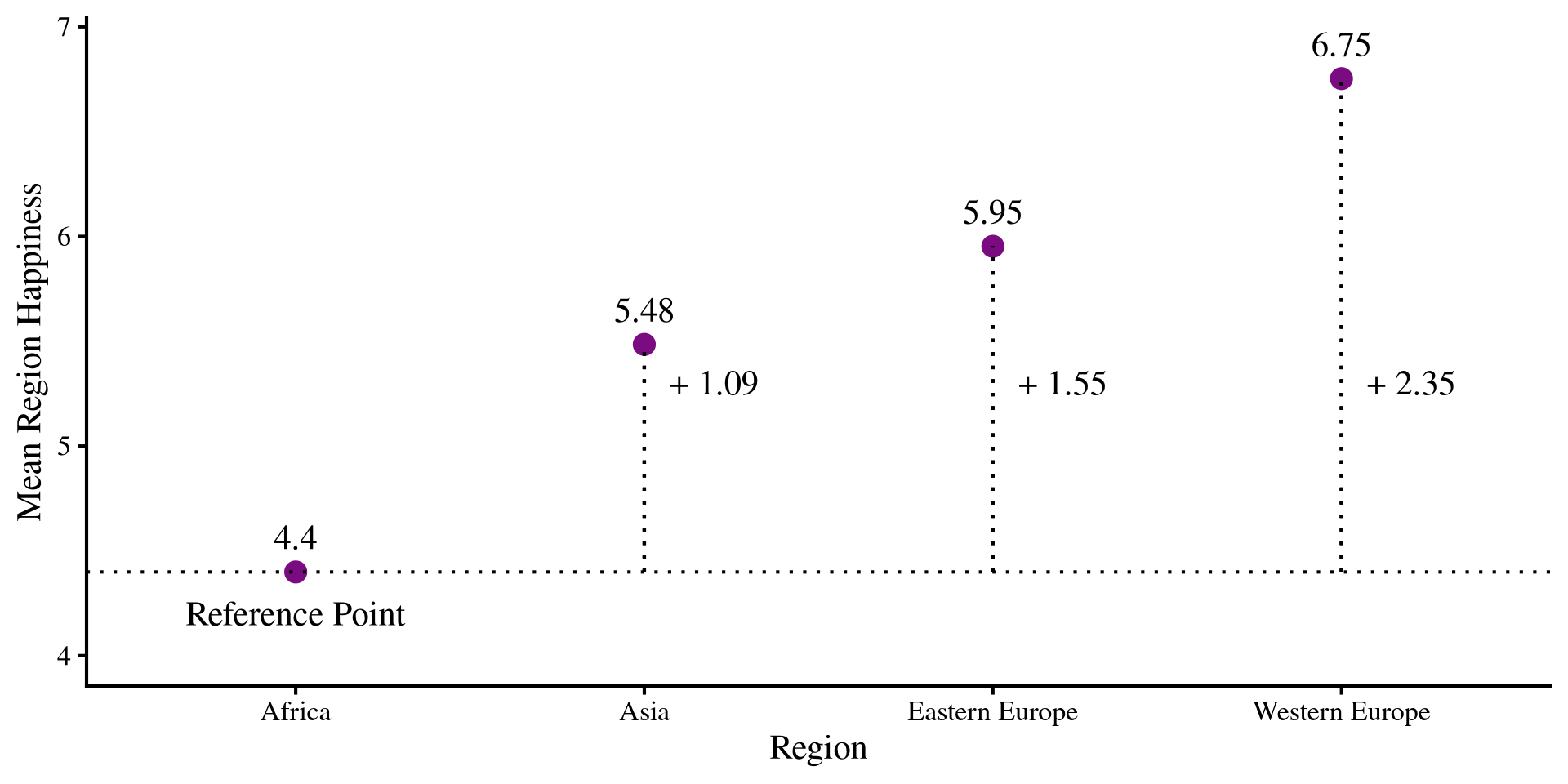

F-statistic: 55.6 on 3 and 102 DF, p-value: < 2.2e-16We get 3 “slopes” each corresponding to the regions that did not have all 0s. Let’s look at the mean Happiness for each region:

You should see that the intercept is the mean Happiness for Africa, while the “slopes” are the differences from each of the other regions happiness from that of Africa!

The Graphical intuition

What is happening is that we have a reference group, which is the one coded a all 0s, Africa in our case. All other slopes will be the mean differences from the reference group.

the intercept and the slopes are:

Coefficient Value

1 Intercept 4.40

2 Asia 1.09

3 Eastern Europe 1.55

4 Western Europe 2.35The slopes represent the change in mean Happiness for each region from the reference group!

Plot Code

happ_means <- dat_filt %>%

group_by(Region) %>%

summarise(Happ_mean = mean(Happiness_score))

# reference mean

ref <- as.numeric(happ_means[1,2])

ggplot(happ_means, aes(x = Region, y = Happ_mean)) +

geom_point(size = 4, color = "#7a0b80") +

geom_text(aes(label = round(Happ_mean, 2)), vjust = -1) +

geom_hline(yintercept = ref, lty = 3) +

geom_segment(x = "Asia",

xend = "Asia",

y = ref,

yend = as.numeric(happ_means[2,2]), lty = 3) +

geom_segment(x = "Eastern Europe",

xend = "Eastern Europe",

y = ref,

yend = as.numeric(happ_means[3,2]), lty = 3) +

geom_segment(x = "Western Europe",

xend = "Western Europe",

y = ref,

yend = as.numeric(happ_means[4,2]), lty = 3) +

annotate("text", x = 1, y = 4.2, label = "Reference Point") +

annotate("text", x = 2.2, y = 5.3, label = paste("+", round(coef(reg_cat)[2], 2))) +

annotate("text", x = 3.2, y = 5.3, label = paste("+", round(coef(reg_cat)[3], 2))) +

annotate("text", x = 4.2, y = 5.3, label = paste("+", round(coef(reg_cat)[4], 2))) +

ylim(c(4, 6.9)) +

ylab("Mean Region Happiness")

Why Does this work?

I think it is worthwhile to dive a bit more into why dummy coding works. The regression from two slides ago implied the following regression equation:

\[\mathrm{Happiness} = 4.40 + 1.09 \times \mathrm{Asia} + 1.55 \times \mathrm{Eastern Europe} + 2.35 \times \mathrm{Western Europe}\]

if you recall, the intercept is “the expected value of Y when all other variables are 0”. If we apply it to this equation we get \(4.40\), the mean happiness of Africa. This is the case because Africa is represented by 0,0,0.

Similarly, we know that Western Europe is represented as 0, 0, 1. So, to get the mean Happiness of Western Europe, we run:

(Intercept)

6.752762 For “1-unit increase” in Western Europe, the mean of Happiness goes from the reference of \(4.40\) to \(6.75\).

The Moral of the Story

So, dummy coding is one way of tricking regression into treating categorical variables as continuous. Dummy ccoding is a type of contrast , and as we have seen in Lab 7, the numbers that we choose for contrasts can be whatever we like as long as they proportionally represent the comparison we are interested in.

For example, nothing prevents me from changing the \(1s\) in the dummy coding to \(3s\). The significance tests will be equivalent:

However, this is impractical! Our coefficients are now interpreted as \(\frac{1}{3}\) the mean difference comepared to the reference group. No one would want that 🤷

Call:

lm(formula = Happiness_score ~ Region, data = dat_filt)

Residuals:

Min 1Q Median 3Q Max

-1.77776 -0.47449 0.07755 0.55595 1.46692

Coefficients:

Estimate Std. Error t value Pr(>|t|)

(Intercept) 4.39908 0.11327 38.837 < 2e-16 ***

RegionAsia 0.36191 0.06338 5.710 1.12e-07 ***

RegionEastern Europe 0.51789 0.06249 8.288 4.84e-13 ***

RegionWestern Europe 0.78456 0.06435 12.192 < 2e-16 ***

---

Signif. codes: 0 '***' 0.001 '**' 0.01 '*' 0.05 '.' 0.1 ' ' 1

Residual standard error: 0.7164 on 102 degrees of freedom

Multiple R-squared: 0.6205, Adjusted R-squared: 0.6094

F-statistic: 55.6 on 3 and 102 DF, p-value: < 2.2e-16References

PSYC 6802 - Lab 9: Multiple Regression I