Lab 5: Power

PSYC 6802 - Introduction to Psychology Statistics

Today’s Packages and Data 🤗

The pwr package (Champely et al., 2020) includes functions to conduct power analysis for some statistical analyses and experimental designs. The package documentation has examples of all the types of power calculations that the package allows for.

What is Power⚡?

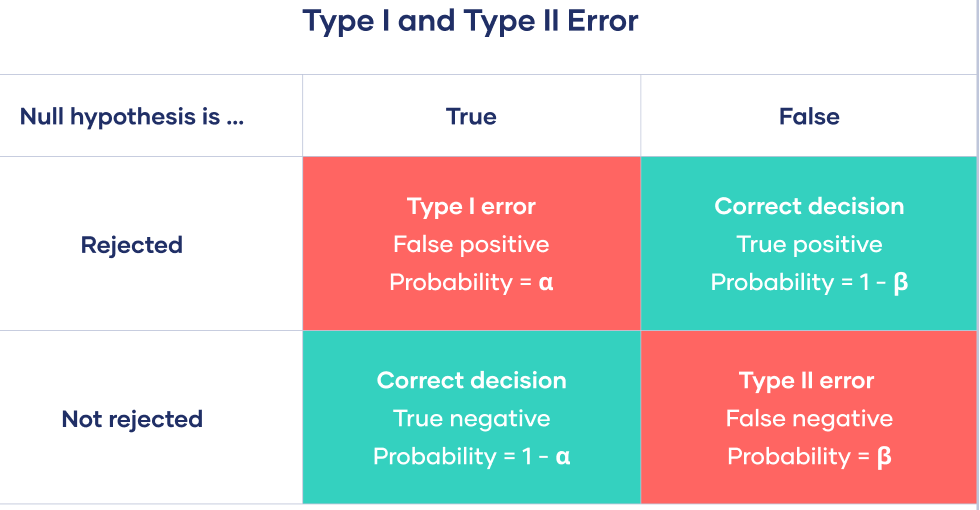

Power: Statistical power is defined as the probability of correctly rejecting the null hypothesis if \(H_0\) is false. Power equals \(1 - \beta\), where \(\beta\) is the Type II error rate: the probability of failing to reject a false \(H_0\).

The Logic of the pwr Functions

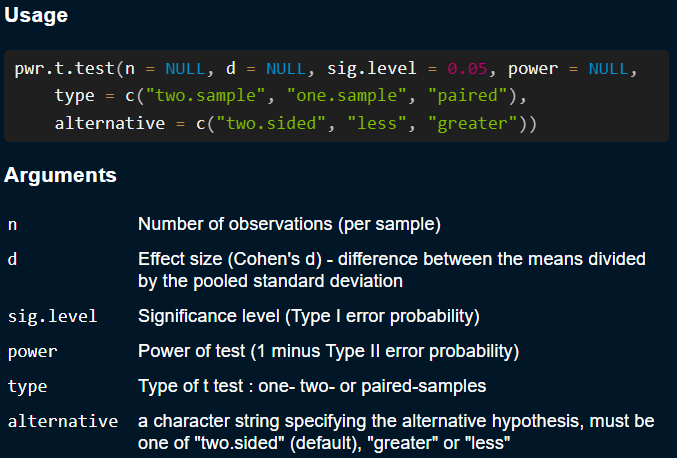

All of the functions in the pwr package work in a very similar fashion. They will generally include arguments representing these 4 factors that influence power:

- Effect size: could be \(d\) in the case of a \(t\)-test, \(W\) for a \(\chi^2\) test, \(R^2\) for regression…

- Sample size: How many participants you expect to have/will need. Will usually be the n = argument.

- Type I error rate: This is the desired \(\alpha\) level. This is always the sig.level = argument.

- Power: The desired power. This is always the power = argument.

pwr functions expect you to leave exactly one of these 4 arguments empty (= NULL), and will compute and return its value.

These arguments may be named differently depending on the type of power analysis, so always check the function's help page (for example, ?pwr.t.test) when trying to use any of the pwr package functions.
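As a minimal sketch of this logic, the two calls below use pwr.t.test() with made-up values (d = 0.5 and n = 50 are illustrative assumptions, not lab results). Whichever of the 4 arguments is left out is the one that gets solved for:

```r
library(pwr)

# Leave n out (n = NULL is the default): solve for the per-group sample size.
solve_n <- pwr.t.test(d = 0.5, sig.level = 0.05, power = 0.80)

# Leave power out instead: solve for power given n per group.
solve_power <- pwr.t.test(n = 50, d = 0.5, sig.level = 0.05)

solve_n$n          # required sample size per group
solve_power$power  # power achieved with 50 per group
```

Note that pwr.t.test() defaults to a two-sample test, so n here is the size of each group.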

Power For χ2 Tests

Let’s say that we want to run a study to evaluate the efficacy of a new cancer treatment versus an old one by checking whether there is an association between type of treatment and cancer remission.

We want to find out the necessary sample size (\(N\)) required to:

- Achieve \(.8\) power,

- At \(\alpha = .05\),

- given that we expect an effect of \(W = .5\).

Furthermore, we know that \(df = 1\) because we would have a \(2 \times 2\) table (new/old treatment vs. remission/no remission).
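This calculation can be sketched with pwr.chisq.test(), leaving the sample size unspecified so that the function solves for it. Note that this function names the effect size argument w (lowercase) and the total sample size N:

```r
library(pwr)

# Solve for N: w, df, sig.level, and power are given, N is left out.
chisq_n <- pwr.chisq.test(w = 0.5, df = 1, sig.level = 0.05, power = 0.80)

chisq_n              # full printout of the power calculation
ceiling(chisq_n$N)   # round up to a whole number of participants
```

Because N has to be a whole number of participants, the solved value is rounded up with ceiling().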

Retrospective Power Analysis

Sometimes it is insightful to check how much power a study had given the observed effect size. If you remember the airplane delays example from Lab 3, we got the following results:

- At \(\alpha = .05\),

- with \(N = 106596\) observations,

- we got an effect of roughly \(W = .06\),

- and we had \(df = 1\).

How much power did we have? (That is, what was the probability of rejecting \(H_0\)?)
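A sketch of the retrospective calculation with the values listed above. This time N is supplied and power is the argument left out:

```r
library(pwr)

# Solve for power: w, N, df, and sig.level are given, power is left out.
chisq_power <- pwr.chisq.test(w = 0.06, N = 106596, df = 1, sig.level = 0.05)

chisq_power$power
```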

We had power \(= 1\). This comes as no surprise given the huge sample size, which has by far the largest impact on power.

This means that, given a large enough sample size, any nonzero effect becomes significant, no matter how small 🤷

Power for One Sample \(t\)-tests

In Lab 4 we ran a one sample \(t\)-test to test whether average restaurant ratings were significantly different from 3. With \(d = .21\) and \(N = 200\), we can do a retrospective power calculation:
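A sketch of this calculation with pwr.t.test(). The type = "one.sample" argument matters here, because the function assumes a two-sample test by default:

```r
library(pwr)

# Retrospective power for a one sample t-test: power is left out.
one_sample_power <- pwr.t.test(d = 0.21, n = 200, sig.level = 0.05,
                               type = "one.sample")

one_sample_power$power
```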

So, given the observed results, we had \(84\%\) power.

Alternatively, let’s say that we want \(95 \%\) power under the same conditions; how large of a sample size do we need?
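The same function can answer this question if we supply power and leave n out instead. A minimal sketch:

```r
library(pwr)

# Solve for n: d, sig.level, and power are given, n is left out.
one_sample_n <- pwr.t.test(d = 0.21, power = 0.95, sig.level = 0.05,
                           type = "one.sample")

ceiling(one_sample_n$n)  # round up to a whole number of participants
```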

We would need \(N = 297\) participants.

Power for Independent Sample \(t\)-tests

In Lab 4, we tested whether males and females were significantly different on average food rating.

Welch Two Sample t-test

data: Overall_Rating by Gender

t = 1.1696, df = 154.21, p-value = 0.244

alternative hypothesis: true difference in means between group Female and group Male is not equal to 0

95 percent confidence interval:

-0.1288966 0.5030181

sample estimates:

mean in group Female mean in group Male

3.335366 3.148305

How much power did we have?
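Because the two groups have different sizes, the printout that follows matches the format of pwr.t2n.test(), which takes n1 and n2 separately. A sketch of the call, using the values shown in that printout:

```r
library(pwr)

# Two-sample t-test with unequal group sizes: power is left out.
two_sample_power <- pwr.t2n.test(n1 = 82, n2 = 117, d = 0.15,
                                 sig.level = 0.05)

two_sample_power
```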

t test power calculation

n1 = 82

n2 = 117

d = 0.15

sig.level = 0.05

power = 0.1792332

alternative = two.sided

Only \(18\%\) power. It means that it was fairly unlikely for us to reject \(H_0\) given the observed effect size and sample size.

Low Power is bad Because…

Low power is not bad because it will be hard to get \(p < .05\). Low power is bad because, when you do get \(p < .05\) with low power, it becomes much more likely that:

- The effect size will be largely overestimated! (Type M error).

- The effect will be in the wrong direction! (Type S error).
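A small simulation can make both errors concrete. The sketch below is not part of the lab: it assumes normally distributed scores with a standard deviation of 1 in both groups, a true difference of 0.15, and the group sizes from the independent samples example. It then looks only at the replications that reached \(p < .05\):

```r
set.seed(6802)

true_d <- 0.15   # assumed true difference (in SD units, since SD = 1)
n1 <- 82
n2 <- 117
n_sims <- 5000   # number of simulated studies (arbitrary choice)

# Each replication returns the estimated mean difference and its p-value.
sims <- replicate(n_sims, {
  group1 <- rnorm(n1, mean = true_d, sd = 1)
  group2 <- rnorm(n2, mean = 0, sd = 1)
  test <- t.test(group1, group2)  # Welch test, as in the output above
  c(est = unname(test$estimate[1] - test$estimate[2]), p = test$p.value)
})

est <- sims["est", ]
sig <- sims["p", ] < 0.05

power_sim <- mean(sig)                    # share of significant results
type_m <- mean(abs(est[sig])) / true_d    # average exaggeration factor
type_s <- mean(est[sig] < 0)              # share with the wrong sign

c(power = power_sim, type_m = type_m, type_s = type_s)
```

Here type_m is the average factor by which significant estimates overstate the true difference, and type_s is the proportion of significant estimates that point in the wrong direction.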

So, I think people care about power for the wrong reasons 🤷 Some related blog posts/manuscripts by Andrew Gelman:

Appendix: Power For F-tests, Correlation, and Regression

Power for Correlation

Power for correlation coefficients is fairly straightforward to compute.

- If we expect to see \(r =.3\), what sample size do we need to have \(80\%\) power at \(\alpha = .05\)?
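This question can be sketched with pwr.r.test(), which takes the expected correlation as r and solves for n when it is left out:

```r
library(pwr)

# Solve for n: r, sig.level, and power are given, n is left out.
cor_n <- pwr.r.test(r = 0.3, sig.level = 0.05, power = 0.80)

cor_n
ceiling(cor_n$n)  # round up to a whole number of participants
```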

Power in Regression

In regression, we calculate power based on \(R^2\). More specifically, we use Cohen’s \(f^2\). There are two slight variations on \(f^2\):

When you have a single set of predictors and you want to calculate power for a regression,

\[ f^2 = \frac{R^2}{1 - R^2} \]

When you want to calculate power for \(\Delta R^2\), that is, whether a set of predictors B provides a significant improvement over an original set of predictors A,

\[ f^2 = \frac{R^2_{AB} - R^2_{A}}{1 - R^2_{AB}} \]

where \(R^2_{AB} - R^2_{A} = \Delta R^2\), the improvement in \(R^2\) from adding the set of predictors B.

Larger \(R^2\) values imply larger \(f^2\) values. Some guidelines are that \(f^2\) of .02, .15, and .35 can be interpreted as small, medium, and large effect sizes respectively. Why do we use \(f^2\)? Check this out if you are curious!
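The two formulas are easy to wrap in small helper functions. The function names and the input values below are hypothetical, chosen only to illustrate the conversion:

```r
# Cohen's f^2 for a single set of predictors.
f2_from_r2 <- function(r2) {
  r2 / (1 - r2)
}

# Cohen's f^2 for the improvement of adding set B to set A.
f2_from_delta_r2 <- function(r2_ab, r2_a) {
  (r2_ab - r2_a) / (1 - r2_ab)
}

# R^2 values of .02, .13, and .26 land close to the small, medium,
# and large f^2 guidelines of .02, .15, and .35.
f2_from_r2(c(0.02, 0.13, 0.26))

# Delta R^2 of .05 on top of a hypothetical R^2_A of .15.
f2_from_delta_r2(r2_ab = 0.20, r2_a = 0.15)
```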

Power For \(R^2\)

In pwr.f2.test(), u stands for \(\mathrm{df_1}\) and v stands for \(\mathrm{df_2}\) of the \(F\)-distribution. We know from Lab 4 that \(\mathrm{df_2} = n - \mathrm{df_1} - 1\). Thus,

\[ n = \mathrm{df_2} + 1 + \mathrm{df_1} = 61.18 + 1 + 3 = 65.18 \]
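The steps from \(R^2\) to \(n\) can be sketched as follows. The \(R^2\) value used here is a hypothetical placeholder, since the value used in the lab is not shown above; only the 3 predictors (\(\mathrm{df_1} = 3\)) come from the equation:

```r
library(pwr)

r2 <- 0.15          # hypothetical expected R^2 (assumption)
n_predictors <- 3   # df1, as in the equation above

f2 <- r2 / (1 - r2) # convert R^2 to Cohen's f^2

# Solve for v (df2): u, f2, sig.level, and power are given, v is left out.
r2_power <- pwr.f2.test(u = n_predictors, f2 = f2,
                        sig.level = 0.05, power = 0.80)

# Convert df2 back to a sample size: n = df2 + 1 + df1, rounded up.
n_needed <- ceiling(r2_power$v + 1 + r2_power$u)
n_needed
```

pwr.f2.test() returns degrees of freedom rather than a sample size, so the last step is always needed to get \(n\).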

Power For \(\Delta R^2\)

So, if we want to have \(80\%\) power for \(\Delta R^2 = .05\), we now need at least \(131\) participants. We know this because

\[ n = 125.5 + 4 + 1 = 130.5 \]
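A sketch of the same workflow for \(\Delta R^2\). Only \(\Delta R^2 = .05\) and \(80\%\) power come from the text; the baseline \(R^2_A\), the number of added predictors, and reading the 4 in the equation as the total number of predictors in A and B are all assumptions made for illustration:

```r
library(pwr)

r2_a <- 0.15     # hypothetical R^2 for predictor set A (assumption)
r2_ab <- 0.20    # R^2 after adding set B, so Delta R^2 = .05
n_added <- 1     # hypothetical number of predictors in set B (u)
n_total <- 4     # assumed total number of predictors in A and B

f2_change <- (r2_ab - r2_a) / (1 - r2_ab)

# Solve for v (df2): u, f2, sig.level, and power are given, v is left out.
delta_power <- pwr.f2.test(u = n_added, f2 = f2_change,
                           sig.level = 0.05, power = 0.80)

# n = df2 + total number of predictors + 1, rounded up.
n_needed <- ceiling(delta_power$v + n_total + 1)
n_needed
```

Here u counts only the predictors being added, while the conversion from v to \(n\) uses every predictor in the full model.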

I already linked this in the first slide, but this page has very detailed explanations on how to use all the functions from the pwr package.

References
