Lab 10: Multiple Regression II

PSYC 6802 - Introduction to Psychology Statistics

Today’s Packages and Data 🤗

Today we will look at some data about US states. I chose this subset of variables from a larger dataset that you can find here.

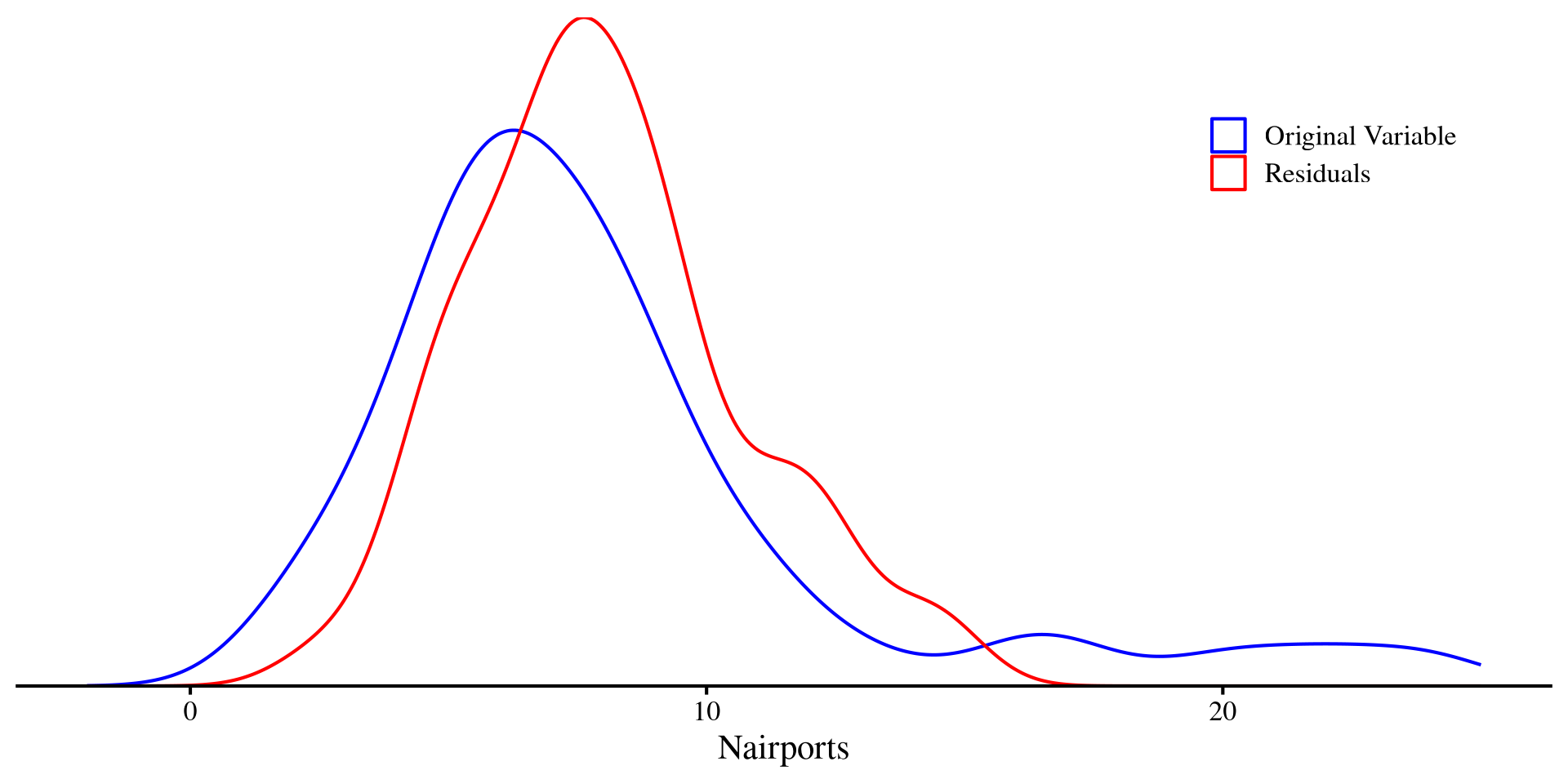

Another Perspective on Residuals

in Lab 6 we have described variance as the part of a variable that is not predictable. Let’s say that we run a regression with the state population, Population, predicting the number of airports in the state, Nairports.

Plot code

ggplot(dat, aes(x = Nairports, color = "Raw")) +

geom_density() +

geom_density(aes(x = reg_pop$residuals + mean(dat$Nairports), color = "residuals"))+

xlim(c(-2, 25)) +

scale_y_continuous(expand = c(0,0)) +

scale_color_manual(

values = c("Raw" = "blue",

"residuals" = "red"),

name = "",

labels = c(

"Raw" = "Original Variable",

"residuals" = "Residuals")) +

theme(axis.title.y = element_blank(),

axis.text.y = element_blank(),

axis.ticks.y = element_blank(),

axis.line.y = element_blank(),

legend.position = c(0.95, 0.95),

legend.justification = c("right", "top"))

In a way, the residuals are the unpredictable part of a DV left after regression. That is, everything that cannot be explained by the IV.

Correlations With Residuals

If \(Y\) is our DV and \(X\) is our IV, the residuals of \(Y\) given \(X\) will be the part of \(Y\) that cannot be predicted by \(X\), which we will call \(Y_{.x}\). Let’s say that \(Y =\) Nairports, \(X =\) Population, and \(Z =\) TotalSqMiles.

Partial correlation

The partial correlation between two variables \(Y\) and \(Z\) accounting for \(X\) is the correlation between \(Y_{.x}\) and \(Z_{.x}\).

This is the relation between Nairports and TotalSqMiles after partialling out the part that is predicted by population from both variables.

Semi-Partial correlation

The semi-partial correlation between two variables \(Y\) and \(Z\) accounting for \(X\) is the correlation between \(Y\) and \(Z_{.x}\) or \(Y_{.x}\) and \(Z\). Because you partial out \(X\) from only one of the two variables, we have two possible semi-partial correlations!

Some More intuition

The partial and semi-partial correlation may seem to come a bit out of nowhere, but their idea is the exact same as multiple regression: we want to know the relation between two variables, after controlling for some other variables. We can see that the standardized slopes will be very close to the semi-partial correlation:

# standardize our X, Y, Z variables

dat$Nairports_std <- scale(dat$Nairports)[,1]

dat$Population_std <- scale(dat$Population)[,1]

dat$TotalSqMiles_std <- scale(dat$TotalSqMiles)[,1]

reg_std <- lm(Nairports_std ~ Population_std + TotalSqMiles_std , dat)

# here I am printing the intercept and slopes

round(coef(reg_std), 3) (Intercept) Population_std TotalSqMiles_std

0.000 0.637 0.581 Where the semi-partial correlation and the standardized regression coefficient of TotalSqMiles are very similar, but not quite the same (see the 3rd post here).

In contrast, the partial correlation is just the correlation between two residuals. One good use of partial correlation is calculating the correlation between two variables, after accounting for some Confounding variable.

Semi-partial Correlation as Effect Size

Semi-partial correlations are also quite useful because they happen to be measures of effect size 😃

Let’s say that we run a regression with Population predicting Nairports and look at the \(R^2\):

Now, if we look at the semi-partial correlation between Nairports and the residuals of TotalSqMiles after accounting for Population:

The ppcor Package

Calculating these correlations by hand is good to understand what is happening, but in practice you would use the functions from the ppcor package:

Partial correlations

Nairports TotalSqMiles Population

Nairports 1.0000000 0.8218767 0.8452136

TotalSqMiles 0.8218767 1.0000000 -0.6552065

Population 0.8452136 -0.6552065 1.0000000Here you get the correlation between any two variables, accounting for all other variables in the correlation matrix.

Semi-Partial correlations

Nairports TotalSqMiles Population

Nairports 1.0000000 0.5765179 0.6319791

TotalSqMiles 0.8149457 1.0000000 -0.4899079

Population 0.8380858 -0.4596045 1.0000000Here, the correlation matrix is not symmetric because, two semi-partial correlations are possible. The variable that is untouched is the variable in the row, while the variable in the column is adjusted for the remaining variables.

You can extract additional information such as significance and tests statistics just as we did in Lab 6 with the corTest() function.

Pesky Data Points

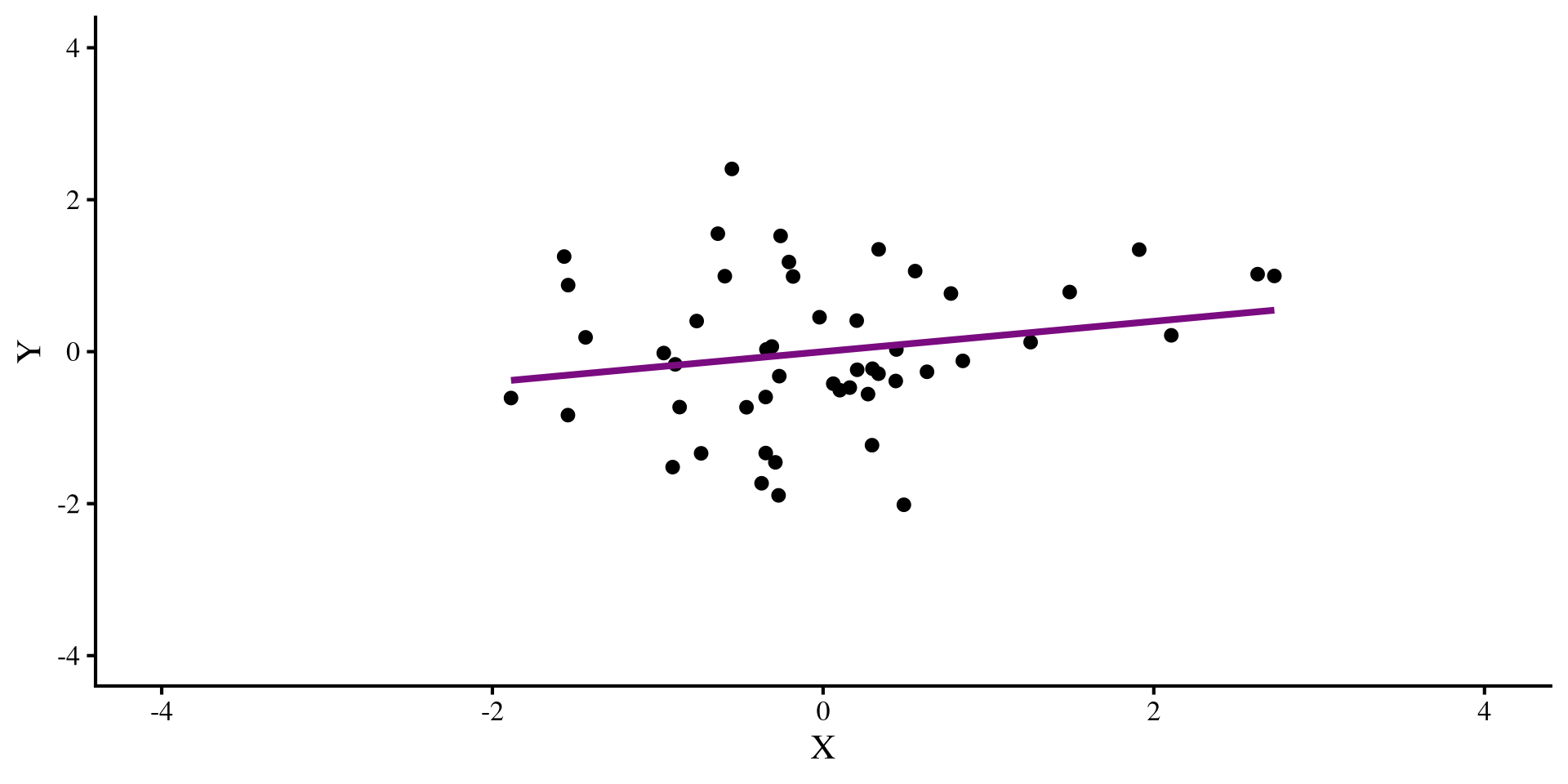

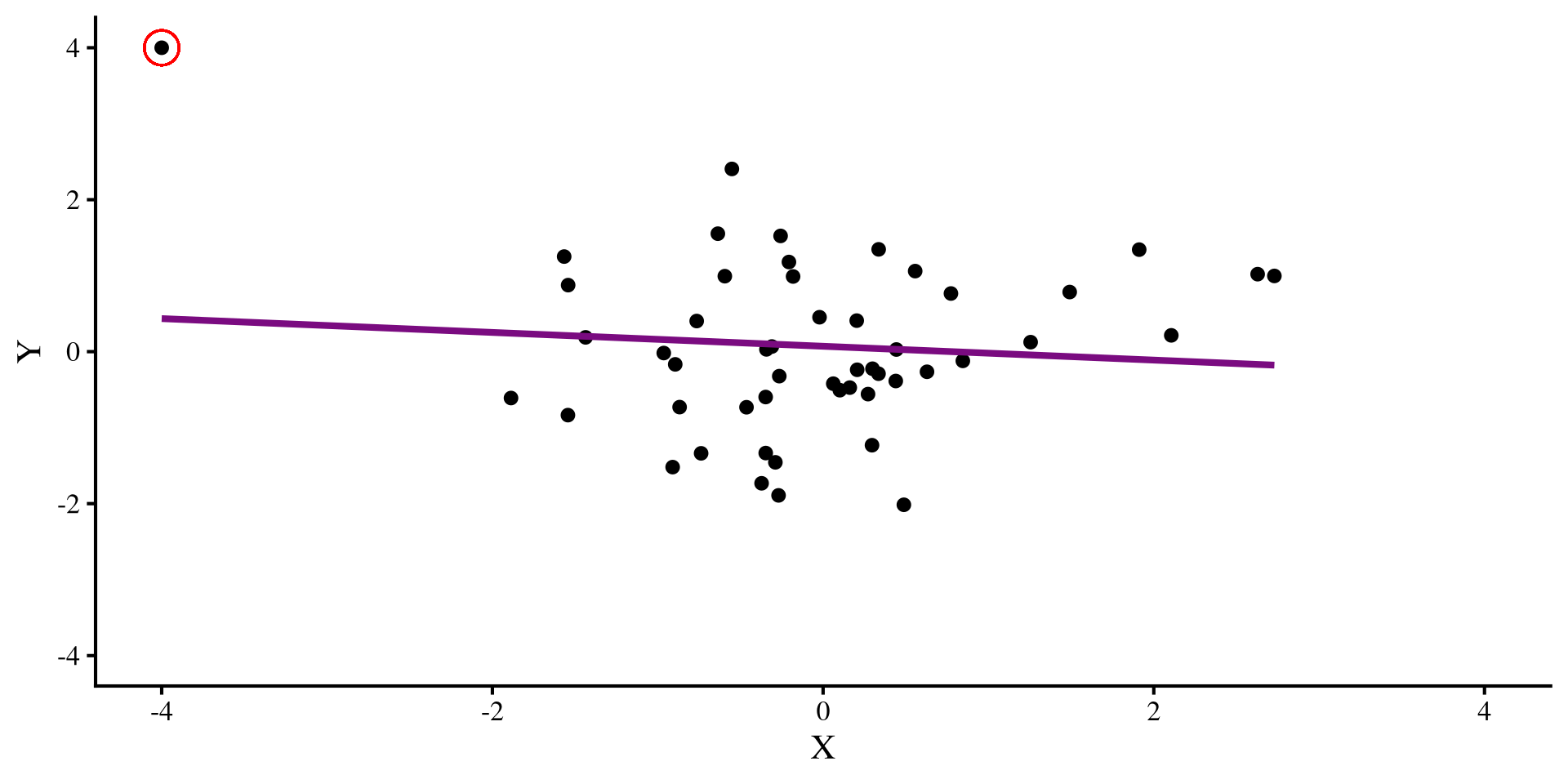

Let’s say that we have this regression, where we see a positive relation between X and Y:

But then we find out that we forgot to include an observation. We include the new observation:

The datapoint that we added is an outlier, and it influences the regression so much that it flips the relation between X and Y 😱 Ideally, we would want to catch such pesky data points, although in the real world, it is usually not possible to eye regression results and tell which observations have this oversized influence on our regression.

Regression Diagnostics

When we are working with a lot of data and/or we have a lot of predictors, it is not possible to graphically establish what points may warrant more attention. Regression diagnostics are a set of statistics that aim at identifying data points that may influence your regression in certain ways. There are 3 types of regression diagnostics. You may see slightly different definitions for these 3 terms. I am getting these definitions/examples from chapter 16 of Darlington & Hayes (2016):

Leverage

Distance

Influence

Quick Regression Diagnostics Summary

- Hat values: measure how unusual an observation is compared to the average observation. (leverage)

- Studentized Residuals: For each data point, it measures how large the residual is on a standardized scale (distance).

- DFFITS: For each data point, it measures how large the change in the predictions, \(\hat{Y}\), would be if that data point was removed (influence).

- Cook’s D: For each data point, it measures how large the change in the predictions, \(\hat{Y}\), would be if that data point was removed. Very similar to DFFITS (influence).

- COVRATIO: For each data point, it measures how large the average change in all the standard errors of the regression coefficients would be if that data point was removed (influence).

- DFBETAS: For each data point, it measures how much each individuals regression coefficient would change if that data point was removed (influence).

) is my favorite measure because for each data point, it tells you how much each regression coefficient will change if that data point is removed. Other measures give some average, which, for most purposes, is not as informative in my opinion.

) is my favorite measure because for each data point, it tells you how much each regression coefficient will change if that data point is removed. Other measures give some average, which, for most purposes, is not as informative in my opinion.

Our Regression and a Quick Graphical Look

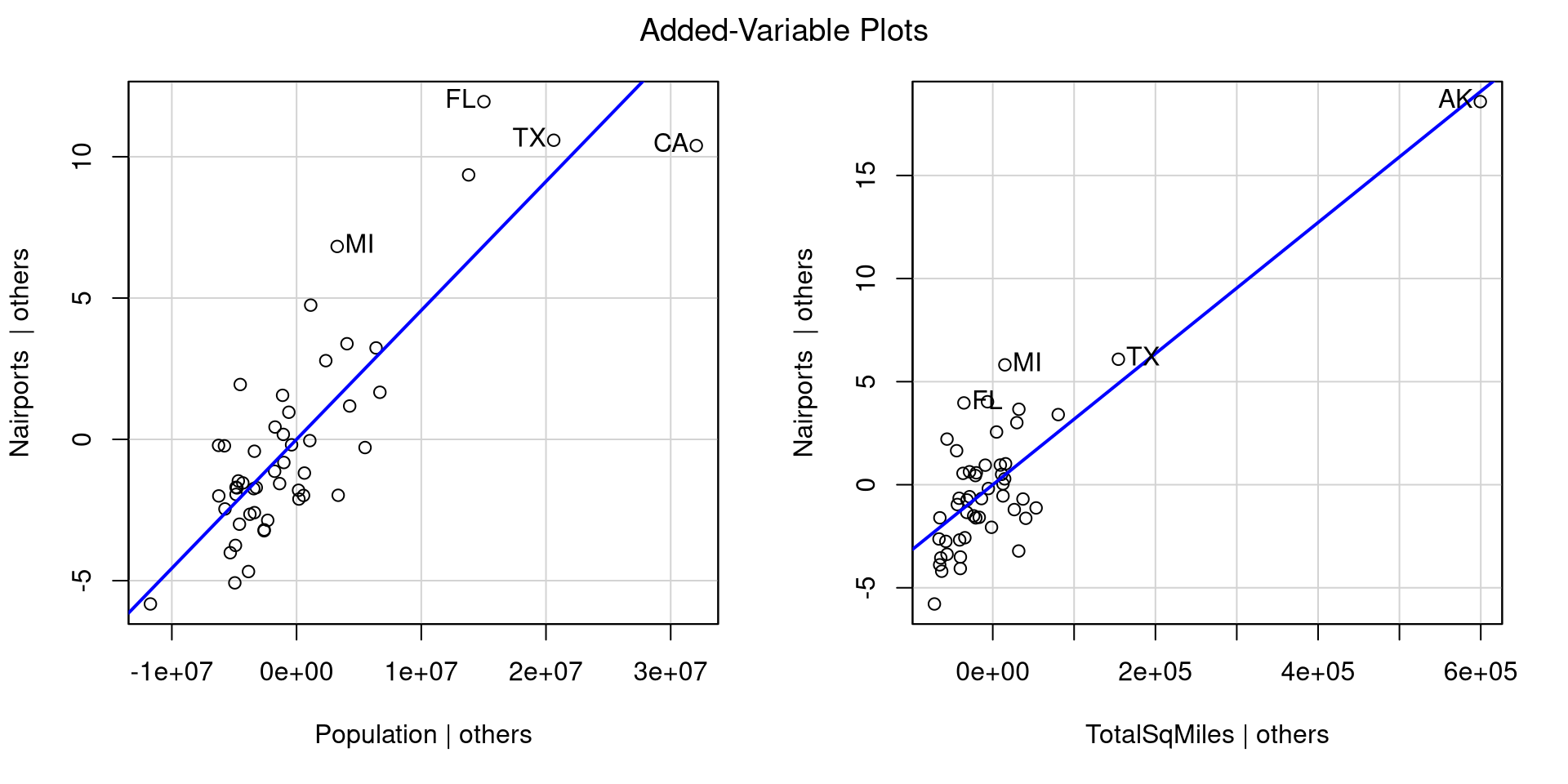

Let’s say that we want to predict Nairports based on Population and TotalSqMiles. We can run the regression and look at the added variable plots to get sense of how our regression looks:

For each plot, the avPlots() function prints the two points with the largest residual and the 2 points with the largest leverage. Although, they are based on individual plots, not the full data.

We can also see that our regression assumptions are probably violated, especially in the second plot, where Alaska is very far away from all the other points.

Leverage: Hat Values

Hat values measure how unusual an observation is compared to the average observation. They are a measure of leverage, and the higher the values, the more unusual the observation given the set of predictors (the lowest possible value is \(0\)).

To compute hat values for all your observations we use the hatvalues() function. Here I only print the 5 largest values:

Here are all the hat values:

Leverage is just a way of determining whether an observation is unusual, but it does not necessarily mean that the observation is problematic. I generally don’t like guidelines of “how big is too big” for regression diagnostics because they don’t generalize well to real world cases. Here, Alaska’s hat value is around twice as large as the second largest one, so somewhat big relative to all other data points.

Distance: Studentized Residuals

Studentized Residuals measure how large each residual is on a standardized scale (a t scale really, but makes no difference in practice). This is a measure of distance from the regression line/plane.

To compute studentized residuals for all your observations you use the rstudent() function. Here I only print the 5 largest and smallest values:

Here are all the residuals:

We are talking about residuals, so they can be both positive (above the regression line) and negative (below the regression line). Because the residuals are on a standardize scale, around 0 is an average residual, where anything with an absolute value of 2 or more is somewhat high. So california, Washington, Florida and Michigan are not very well predicted.

Influence: DFFITS

DFFITS measure how large the change in the predictions, \(\hat{Y}\), would be on average if that data point was removed.

To compute DFFITS for all your observations you use the dffits() function. Here I only print the 5 largest and smallest values:

Here are all the residuals:

Predictions can change either positively or negatively, so we should look at extreme values both ways. You can think of DFFITS as a measure that summarizes how much the whole regression model changes when a data point is removed. Here, we can see that the DFFITS value for California is more than twice as large as the next largest DFFITS value!

Influence: Cook’s D

Cook’s D is very similar to DFFITS because it also summarizes the change in the predictions, \(\hat{Y}\), given any data point if that data point was removed (influence). The difference is that Cook’s D is always positive, and large values imply larger influence.

Here are all the values:

Data points with large DFFITS (in magnitude) will have large Cook’s D, so the interpretation is similar; you can check that the largest absolute values on the previous slides all appear here. As mentioned above, the one notable difference is that Cook’s distance is always positive, so may be easier to interpret from some.

Influence: COVRATIO

COVRATIO measures how large the average change in all the standard errors of the regression coefficients would be if that data point was removed.

To compute COVRATIO values for all your observations you use the covratio() function. Here I only print the 5 largest and smallest values

MI FL WA HI NJ MT RI CA TX AK

0.72 0.78 0.84 0.88 0.92 1.11 1.11 1.18 1.40 5.83 The interpretation of these values is a bit more nuanced than the other regression diagnostics 🤔

Here are all the values:

You may notice that COVRATIO values hover around 1. In fact, \(\mathrm{COVRATIO} = 1\) means that removing the point has no impact whatsoever on the standard errors. On the other hand, \(\mathrm{COVRATIO} < 1\) means that removing the point will make standard errors smaller, while \(\mathrm{COVRATIO} > 1\) means that removing the point will make standard errors larger.

Influence: DFBETAS

DFBETAS represent the change in standard error units (the std.error column in the regression summary) in the intercept and slopes if if that data point was removed.

DFBETAS provide more information than the other measures. Removing a data point may have stronger influence on one of the slopes, but not as much influence on another slope or the intercept 🧐

You can click on the column names in the table on the left to order the table by each column and see the more extreme values for each column.

The change is in standard deviation units, so, for example, removing California reduces the slope of Population by \(2.23\) standard errors (a lot!), but only reduces the slope of TotalSqMiles by \(.17\). Other regression diagnostics do not provide this nuance!

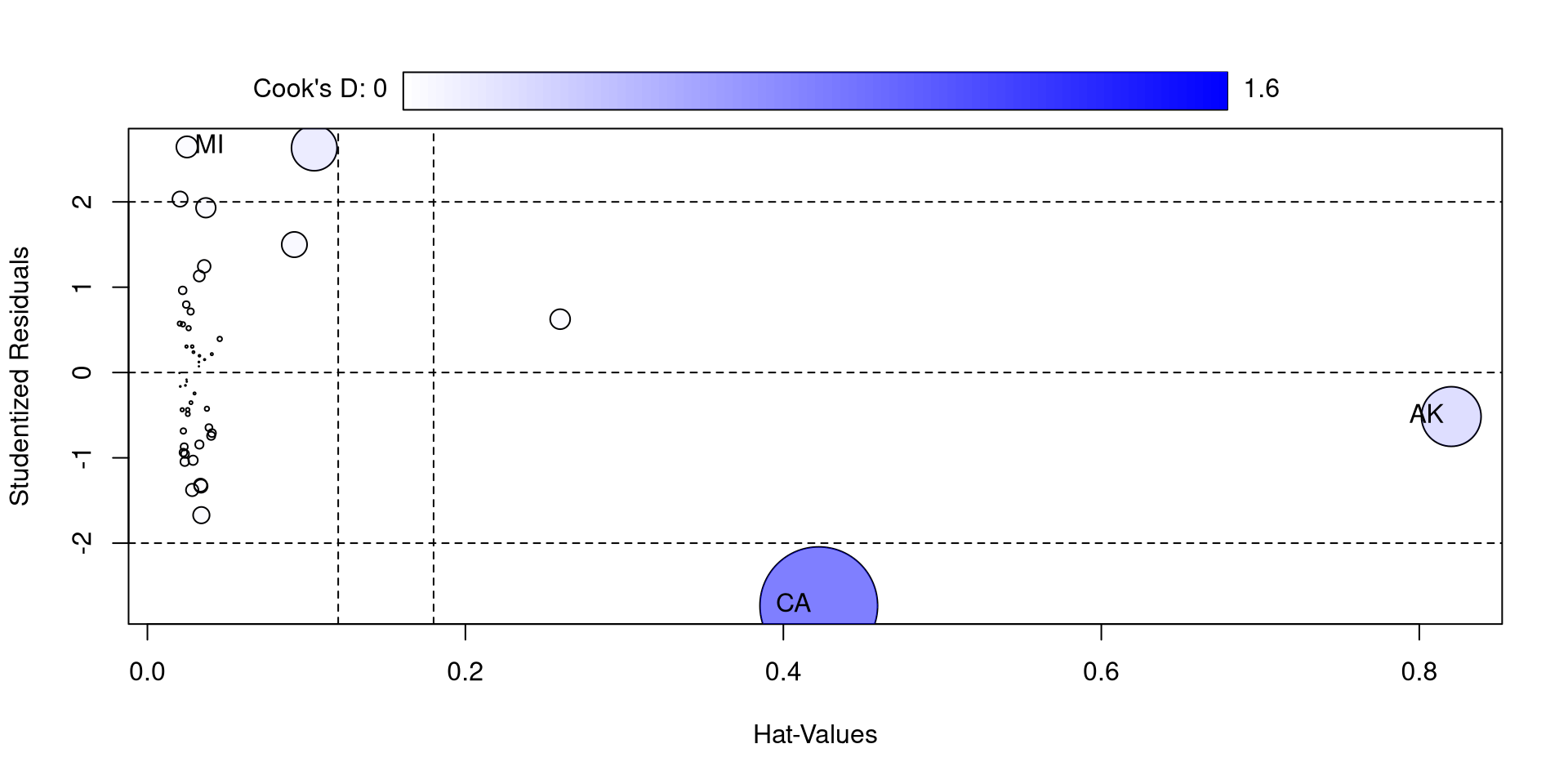

Influence plot with car

The influencePlot() function from the car package also offers a nice visualization for studentized residuals, hat values, and Cook’s D at the same time

So, compared to the other states, California and Alaska are quite high on some of these measures. If you think about it, they are both have “unusual” compared to other state in the California has by far the largest population and Alaska has by far the largest surface.

I also feel like the function is not very aptly named because only Cook’s D is a measure of influence 🫣

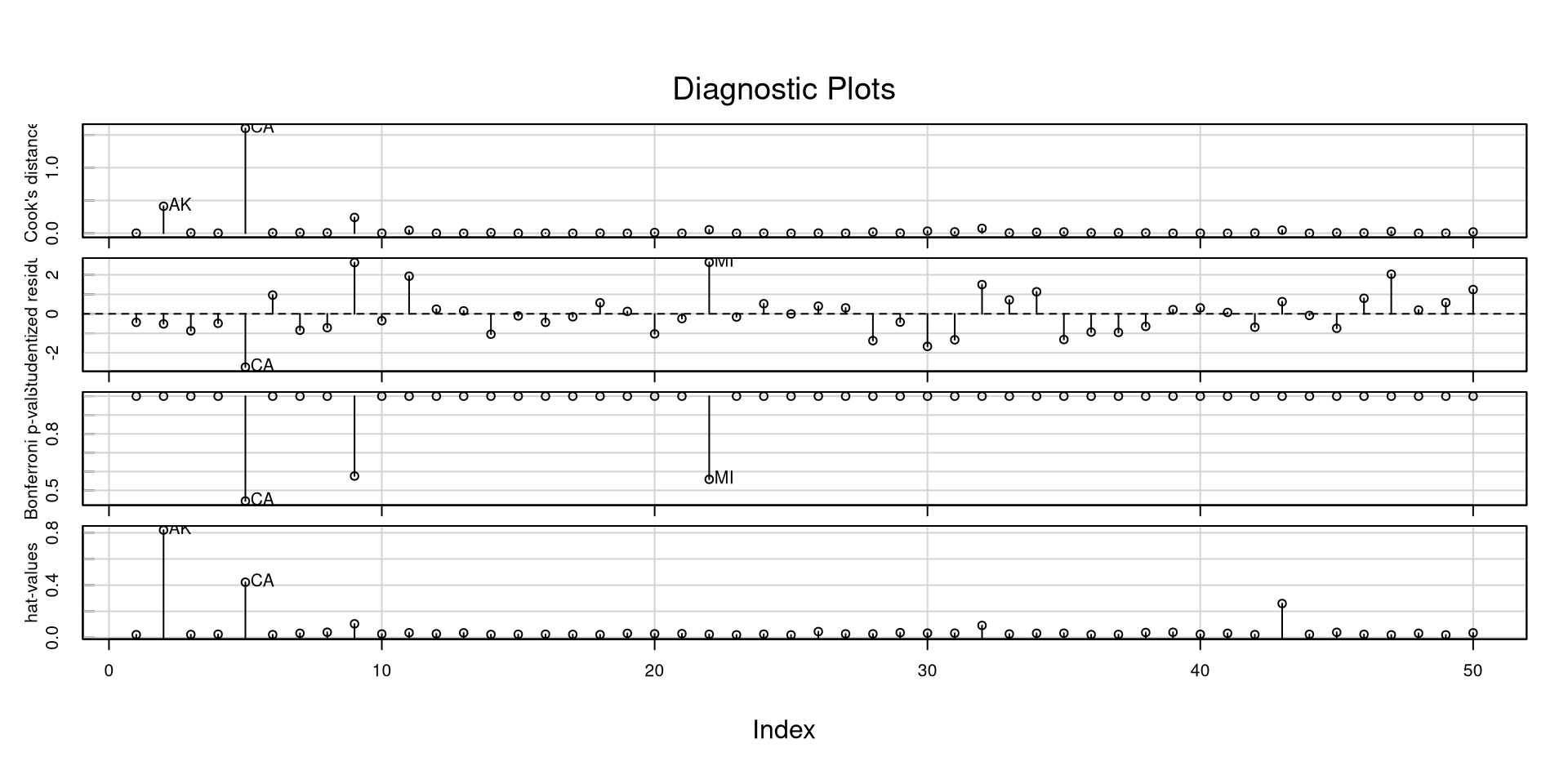

Another Neat car Plot

The influenceIndexPlot() function offers a visualization of a bunch of regression diagnostics. This helps visualizing what observations are extreme relative to other observations

In general, and especially with these measures, I like to look at how large they are relative to all other observations. If you have a data point that is 3 times as large as everything else, it probably warrants some attention.

Variance Inflation Factor

Another important statistic that may as well be a regression diagnostic is the variance inflation factor (VIF), which can be calculated for all regression predictors. For a predictor \(X\),

\[ VIF_x = \frac{1}{1 - R^2}\]

where the \(R^2\) represents the variance explained in the predictor \(X\) by all other predictors. The higher the correlation between predictors, the higher VIFs are going to be. VIFs above 10 generally means that some of your predictors are very highly correlated

we can use the vif() function from the car package to calculate the VIFs for all of our predictors.

VIFs close to 1 mean that there isn’t much correlation between the predictors. High VIF is not desirable because (1) it inflates standard errors of regression coefficients, and (2) it usually makes regression coefficients uninterpretable. Let’s see an example…

Multiple Regression Weirdness

We want to predict percentage of practicing catholics in each state, PctCatholic, based on voting patterns in the 2020 elections.

we have two variables in our data, PctBiden2020 and PctTrump2020, which are the percentages of Biden and Trump voters in each state in 2020. Let’s look at the regression slopes with the two predictors separately and then together:

(Intercept) PctBiden2020

-3.7982684 0.4773207 Because we have learned that multiple regression is better then running separate regressions, let’s run a regression with both of our predictors:

(Intercept) PctBiden2020 PctTrump2020

-76.8087255 1.2189242 0.7505901 …Do you notice anything strange about the regression with both predictors? 🤔

Multicollinearity and Regression Weirdness

In the previous slide we found that the higher percentage of Biden voters was associated with a higher percentage of catholics, whereas the relation was reversed in the case of percentage of Trump voters. However, when we ran a multiple regression, both regression slopes were positive (a bit spooky if you ask me 😱). What is happening?

Well, because of the 2 party system in the US, PctBiden2020 and PctTrump2020 are almost perfectly negatively correlated:

Unsurprisingly, the VIFs are off the charts:

A case of highly correlated predictors is generally referred to as multicollinearity. In such cases, regression slopes are not to be trusted. Another clue that something is not right comes from comparing the \(R^2\) from the individual and multiple regressions:

[1] 0.3195397[1] 0.3125517[1] 0.3236817Where all the \(R^2\) values are almost identical. This means that in practice PctBiden2020 and PctTrump2020 are the same variable!

A Graphical representation

To provide a sense what we are asking our regression to do when we have some highly correlated predictors, let’s look at the 3D plot:

If you move the plot around, you will notice that there is no depth to the cloud of points. That is, we are missing a dimension!

This should really be a 2D plot. Once again, this happens because PctBiden2020 and PctTrump2020 are the same variable and we just have a 2D scatterplot, not a 3D one 🙃

You can imagine how the math may break down when we ask for a regression plane for this sort of data.

All we need is a line, and asking for a plane forces the plane to contort in really strange ways to fit the 2D data 🧐 This is why the regression slopes get really funky in these cases.

References

PSYC 6802 - Lab 10: Multiple Regression II