Lab 14: Regression Diagnostics

PSYC 7804 - Regression with Lab

Spring 2025

Today’s Packages and Data 🤗

No new packages for today!

We will use the 2024 world happiness report data, which we have already used in Lab 4:

Let’s also name the rows with the country names. This helps later when we need to identify problematic data points:

Regression Diagnostics

Regression diagnostics is an umbrella term for methods that help you identify individual data points that may have an undue influence on the regression results. More often than not, these data points would be considered outliers (although, see the info box below).

Before going over the many regression diagnostics that exist, I want to show how sample size is an important consideration when evaluating how concerned you should be about these regression diagnostics.

When should you be careful? In general, outliers influence results more than other data points. Thus, when you have a small sample size (I would say \(N < 100\)?), you want to be extra careful about interpreting your results if some regression diagnostics are off.

What is an outlier?

Determining whether a data point is an outlier is very subjective. Statistical methods may help you identify potential outliers, but you 🫵 ultimately have to decide what to do with those data points. Given what you know about your variables, would you consider those data points outliers? Do you want to remove them? If yes, why remove them? If not, why keep them? Statistics can point you in a direction, but you have to decide what to do and justify it.

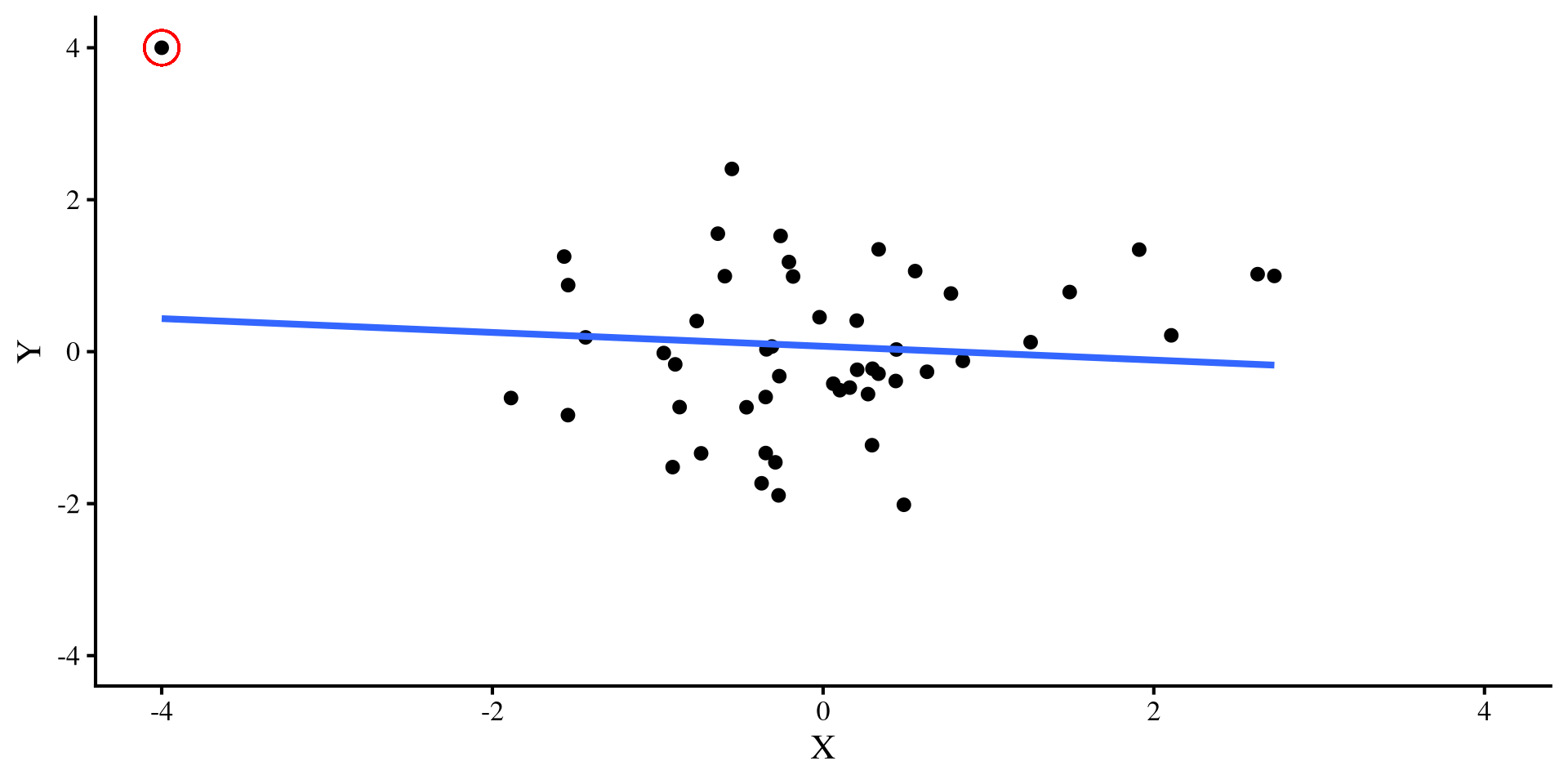

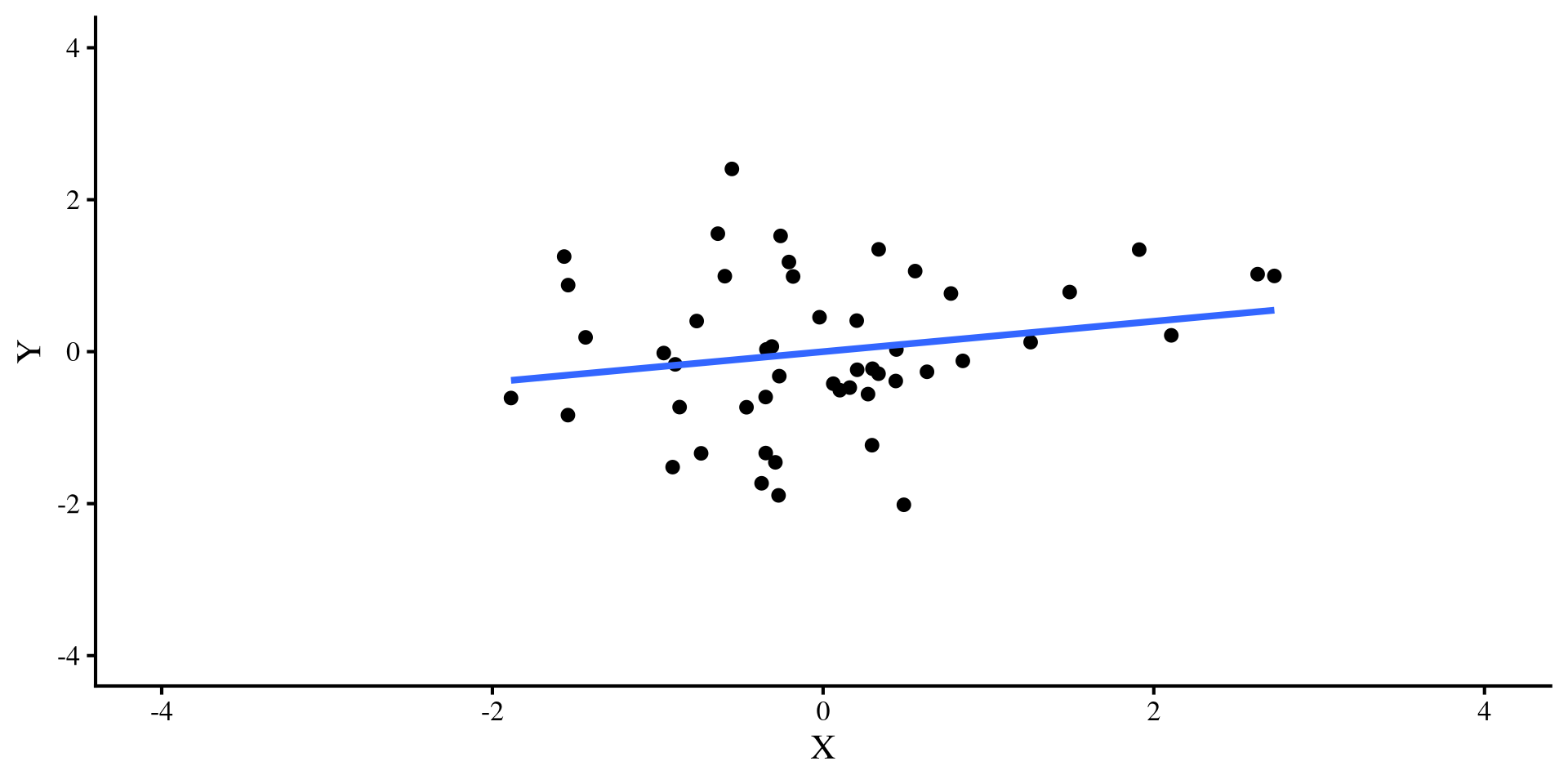

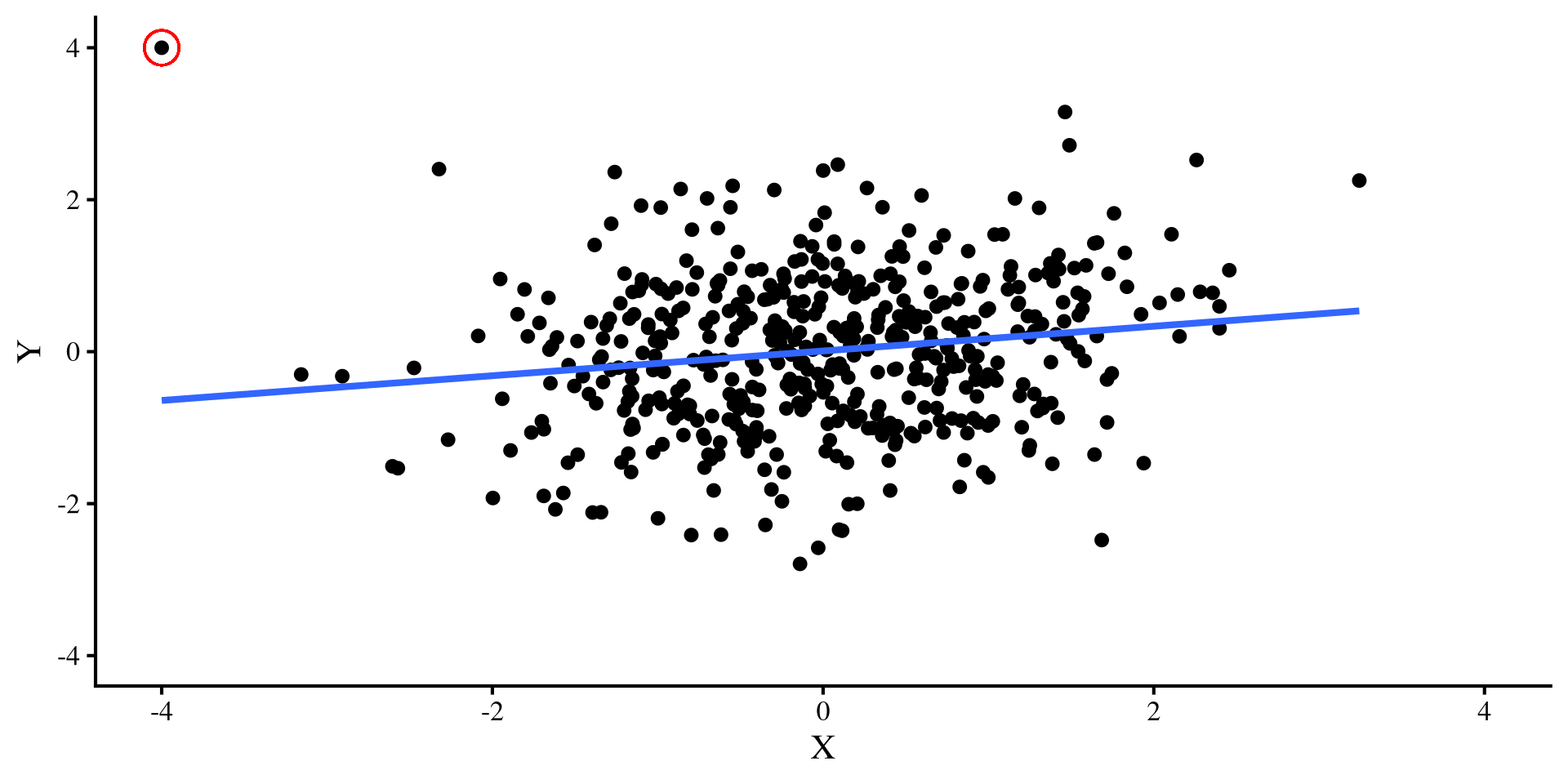

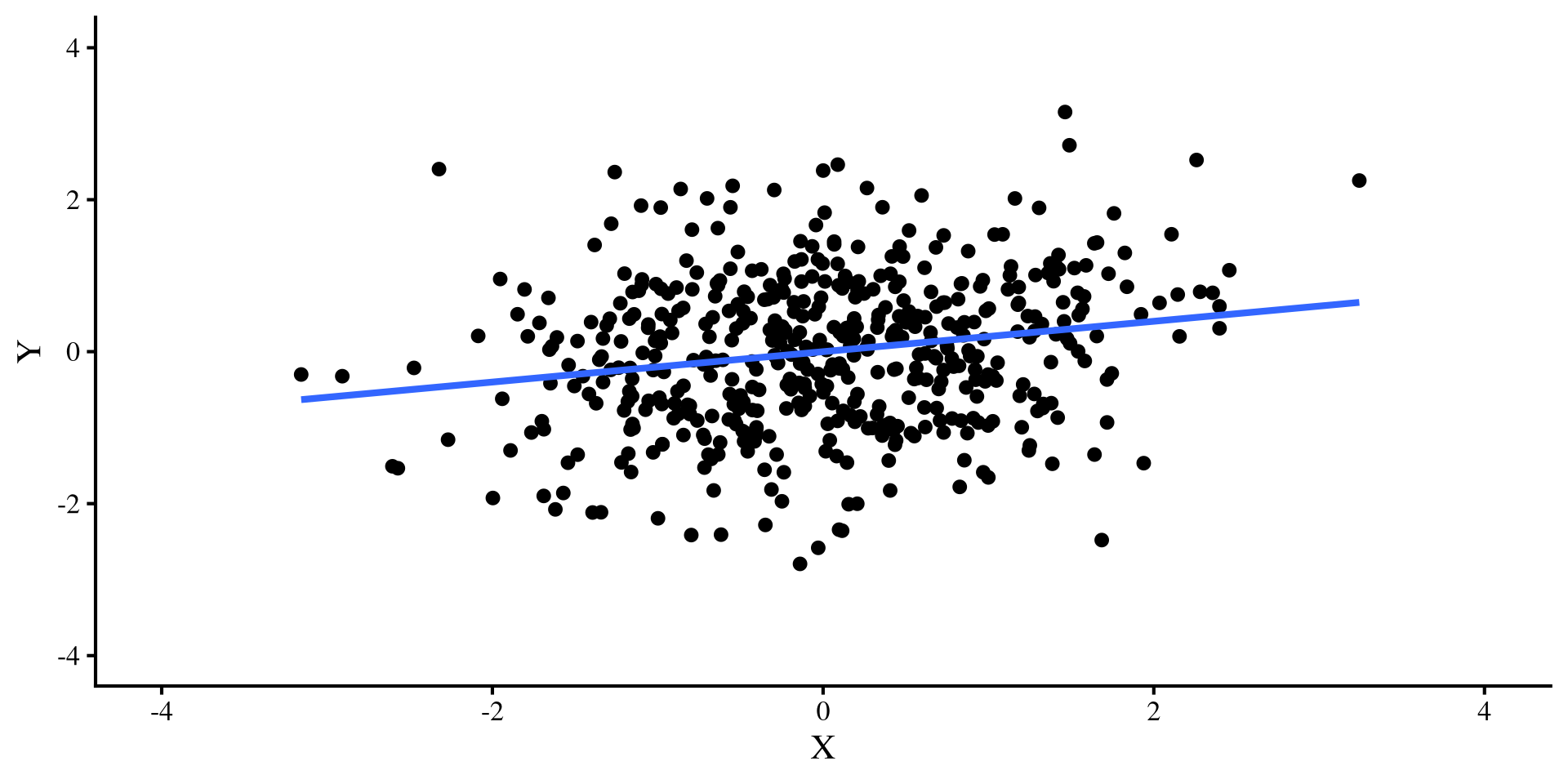

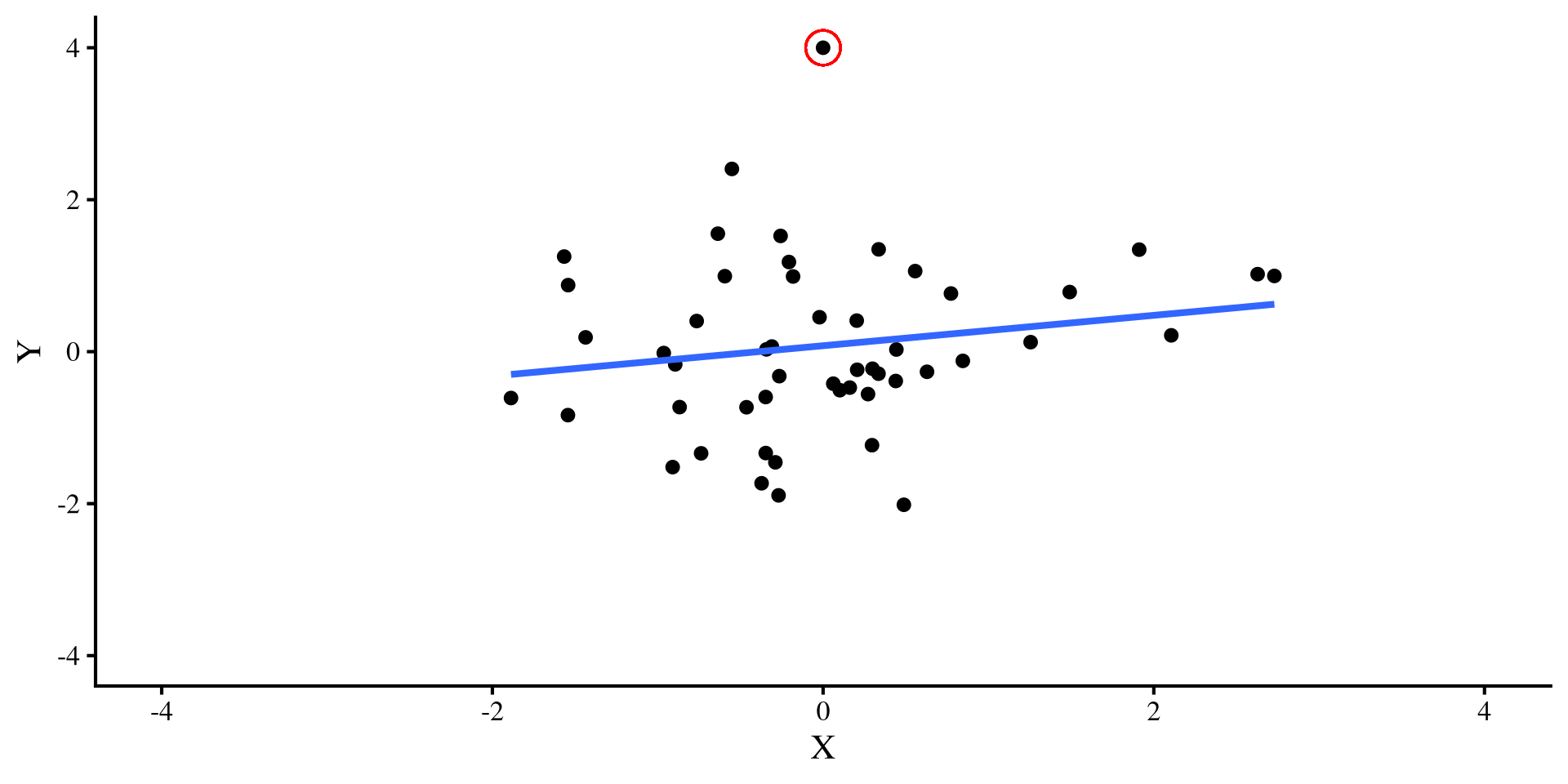

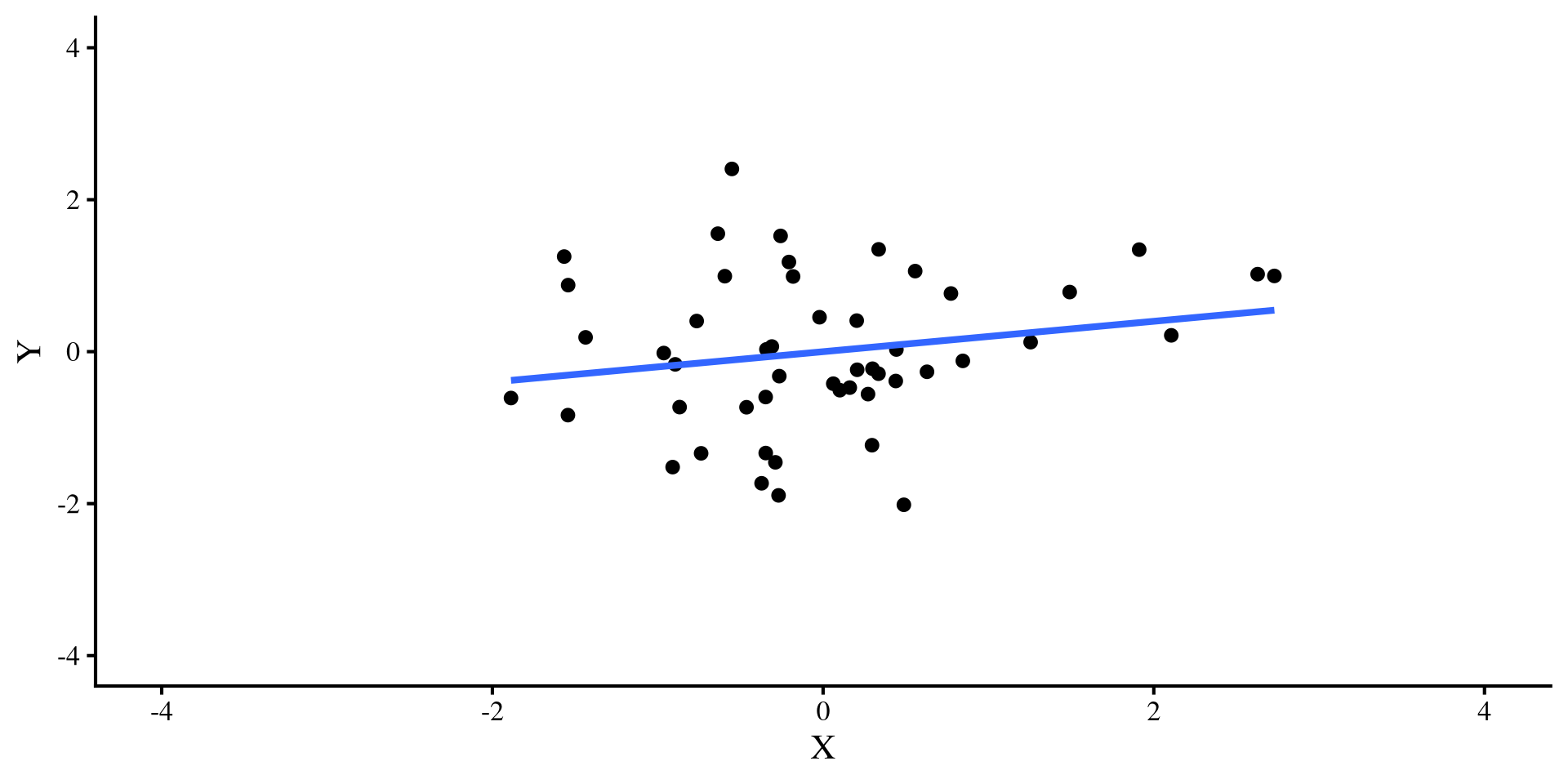

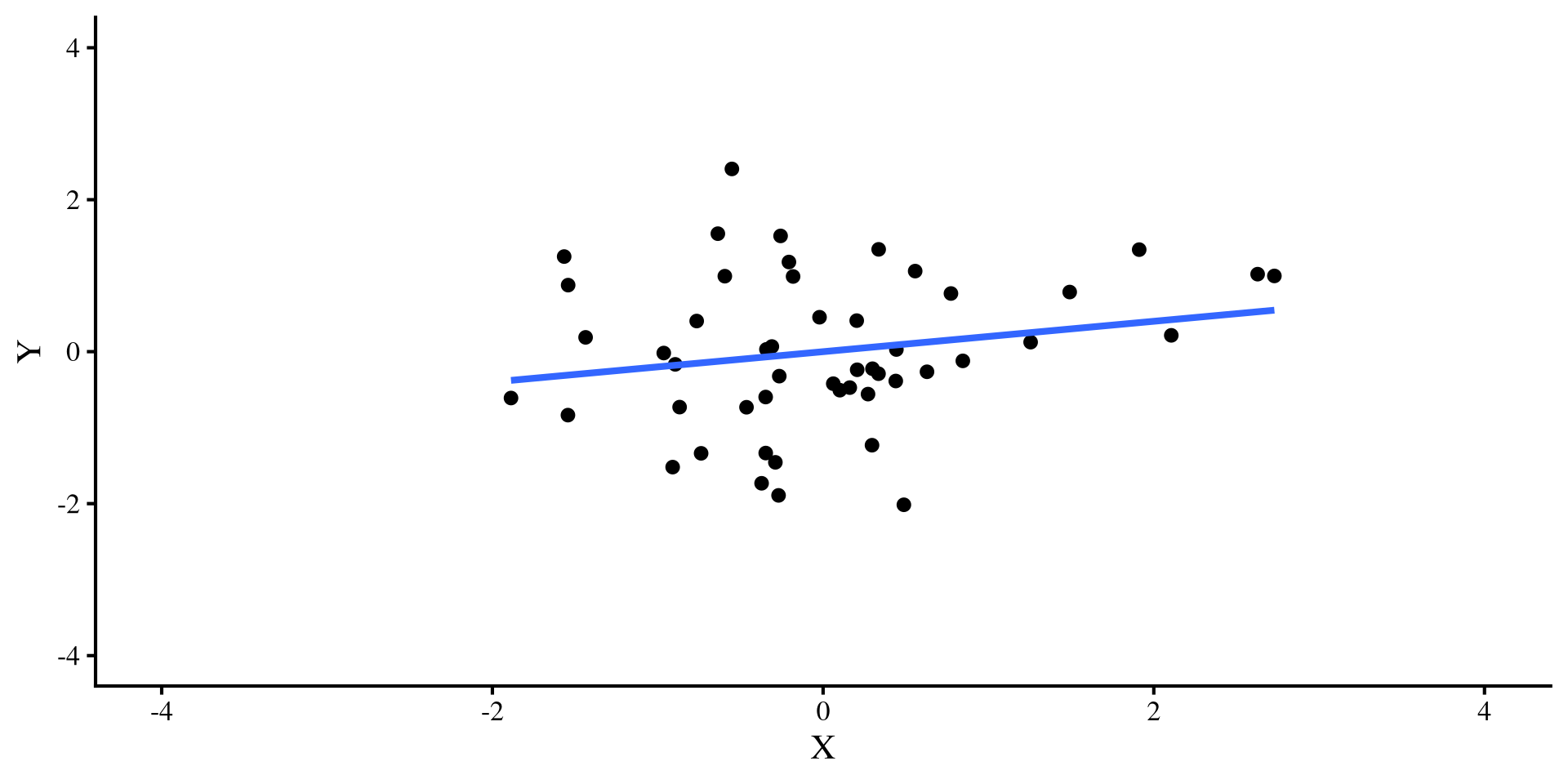

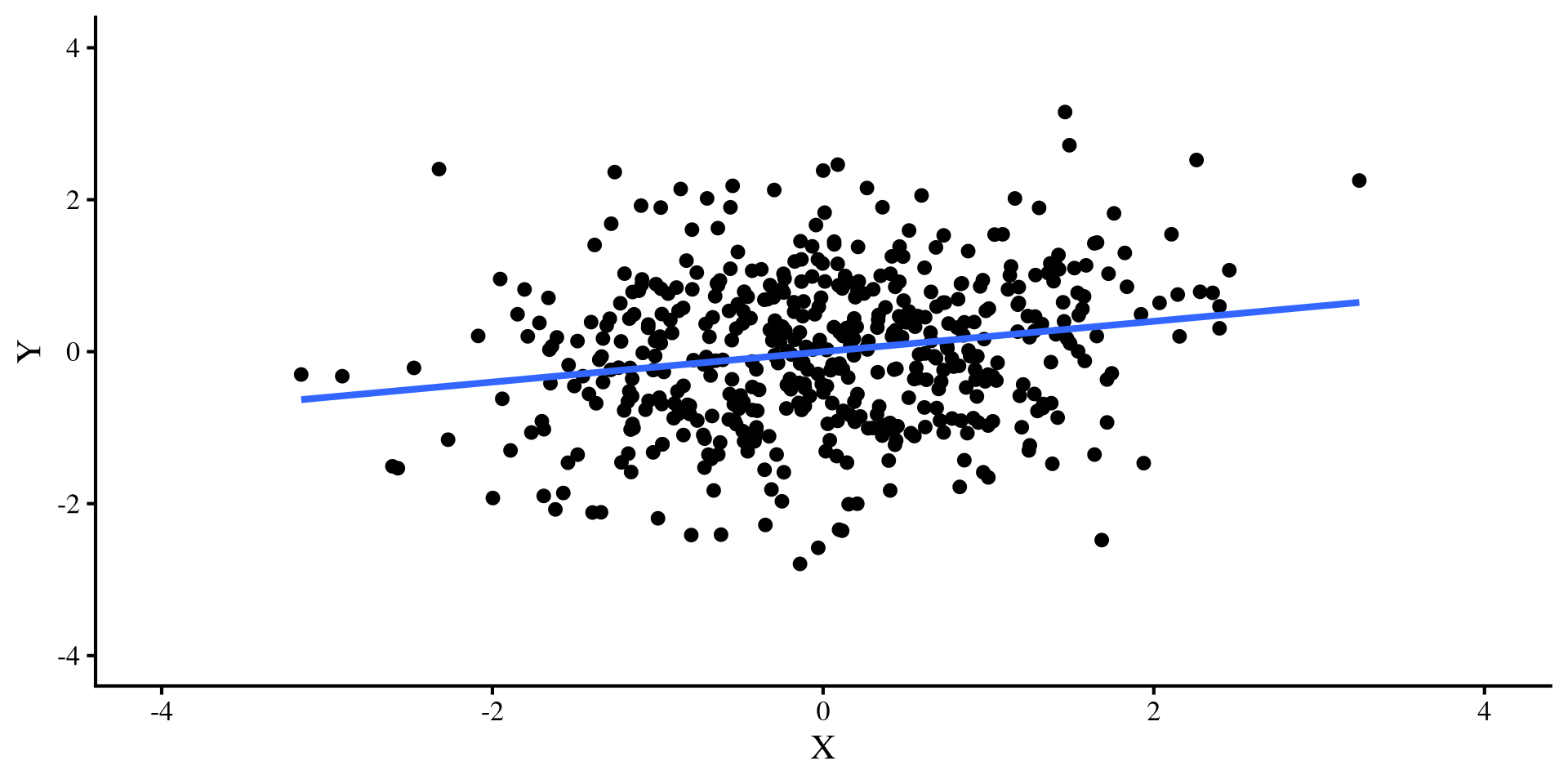

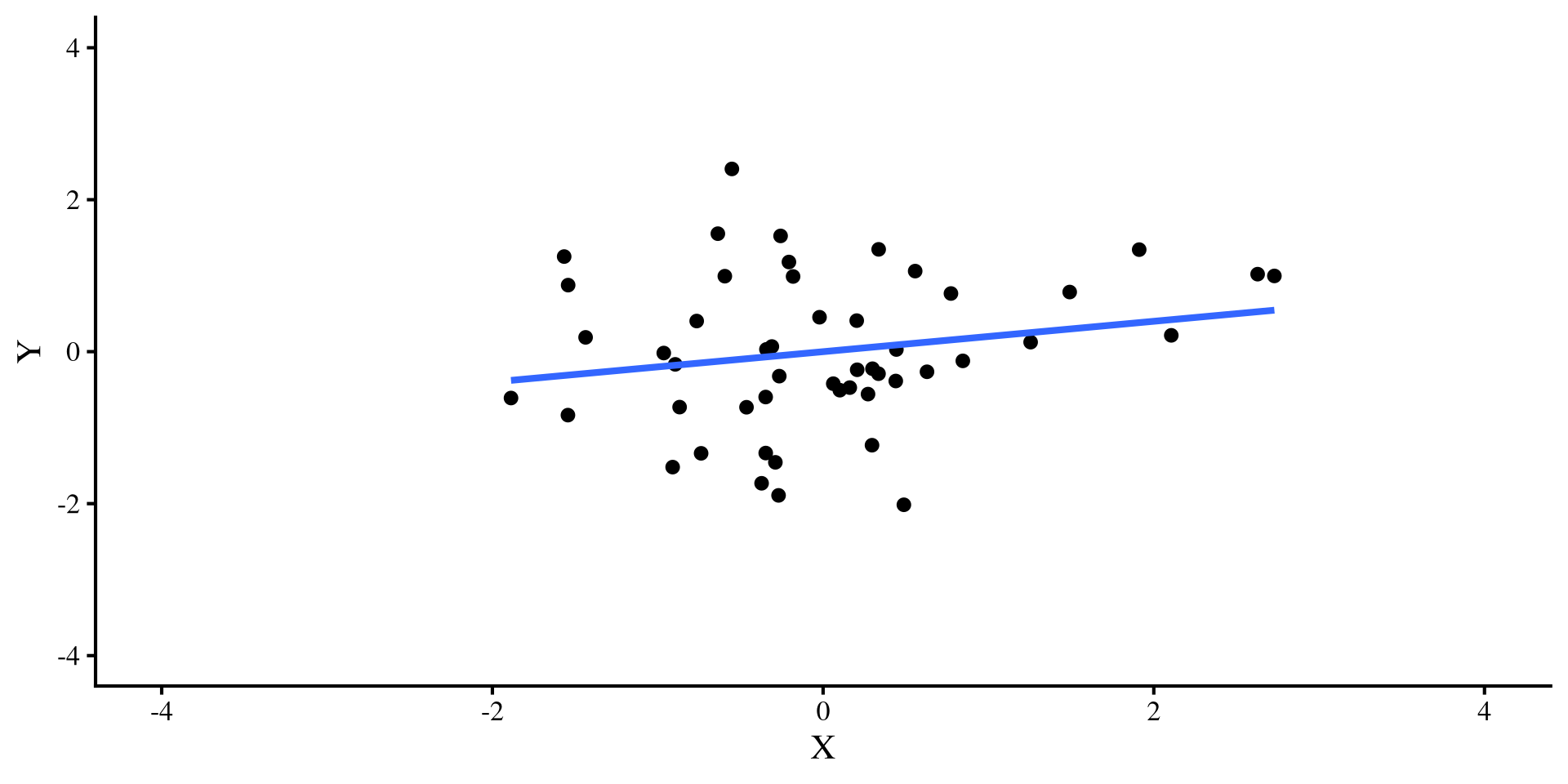

Simulating some Data

Let’s say that I simulate some \(Y\) and \(X\) variables that have a correlation of \(r = .2\). I do this for both a sample size of \(N = 50\) and \(N = 500\) and plot the regression lines:

```r
# simulate data
set.seed(28567)

cor_mat <- rbind(c(1, .2),
                 c(.2, 1))

sim_dat_50 <- data.frame(MASS::mvrnorm(n = 50, c(0, 0), Sigma = cor_mat, empirical = TRUE))
colnames(sim_dat_50) <- c("Y", "X")

sim_dat_500 <- data.frame(MASS::mvrnorm(n = 500, c(0, 0), Sigma = cor_mat, empirical = TRUE))
colnames(sim_dat_500) <- c("Y", "X")
```
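As a quick check of the simulation, here is a minimal sketch that fits the regression in both samples. Because `empirical = TRUE` forces the sample means and covariance matrix to match the requested values exactly, both fits should return an intercept of 0 and a slope of .2 (up to rounding error). The helper `simulate_xy()` and the names `fit_50` and `fit_500` are my own and not part of the lab code.

```r
# Re-create the two simulated data sets (same seed and settings as the lab)
set.seed(28567)
cor_mat <- rbind(c(1, .2),
                 c(.2, 1))

# Hypothetical helper: draw n observations with exactly r = .2 in the sample
simulate_xy <- function(n) {
  d <- data.frame(MASS::mvrnorm(n = n, mu = c(0, 0), Sigma = cor_mat, empirical = TRUE))
  colnames(d) <- c("Y", "X")
  d
}

sim_dat_50  <- simulate_xy(50)
sim_dat_500 <- simulate_xy(500)

# Fit the same simple regression in both samples
fit_50  <- lm(Y ~ X, data = sim_dat_50)
fit_500 <- lm(Y ~ X, data = sim_dat_500)

# Compare intercepts and slopes side by side
round(rbind(N_50 = coef(fit_50), N_500 = coef(fit_500)), 3)
```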

The regression line is exactly the same for both plots, but let’s see what happens once we add a pesky outlier…

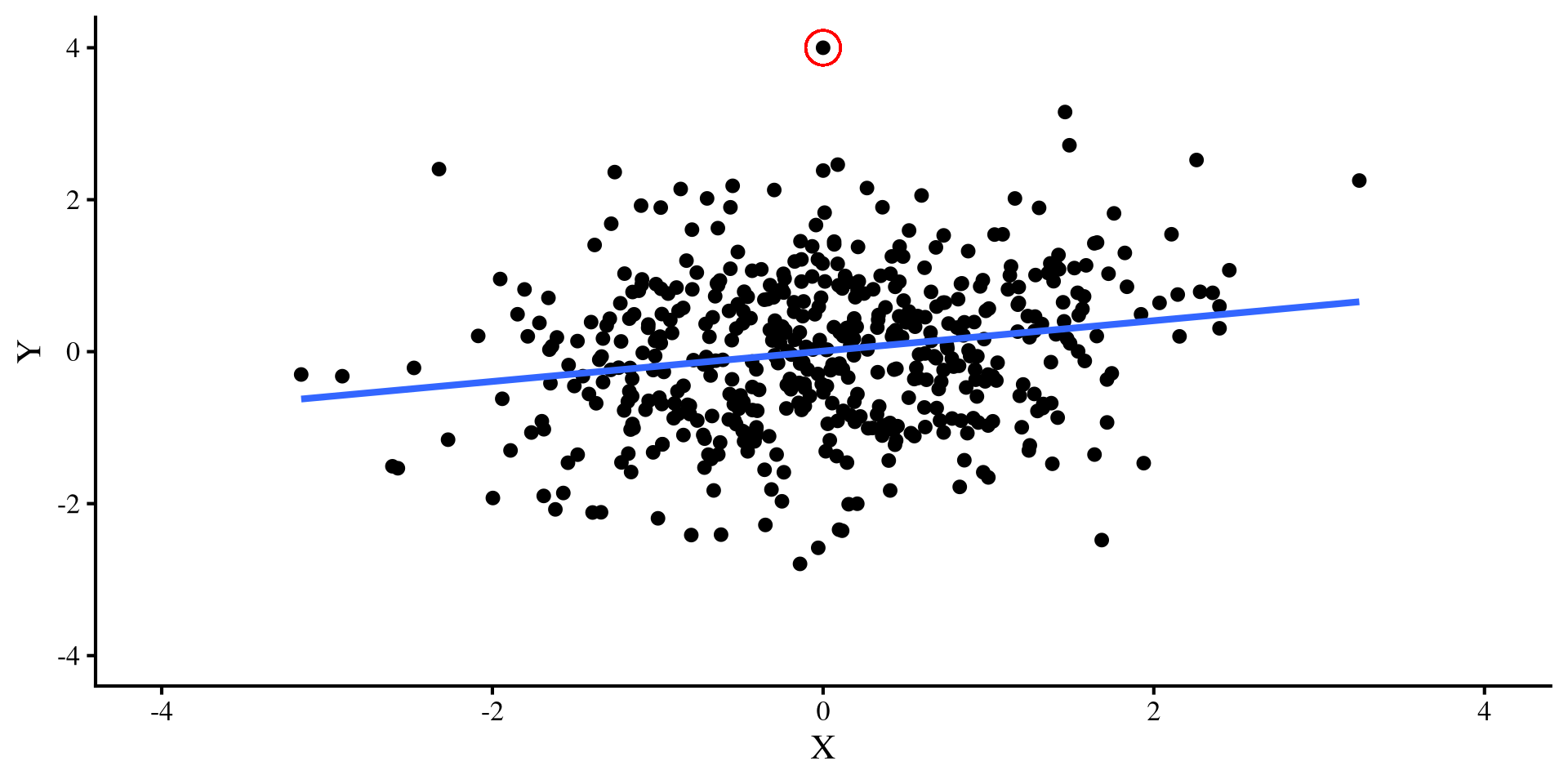

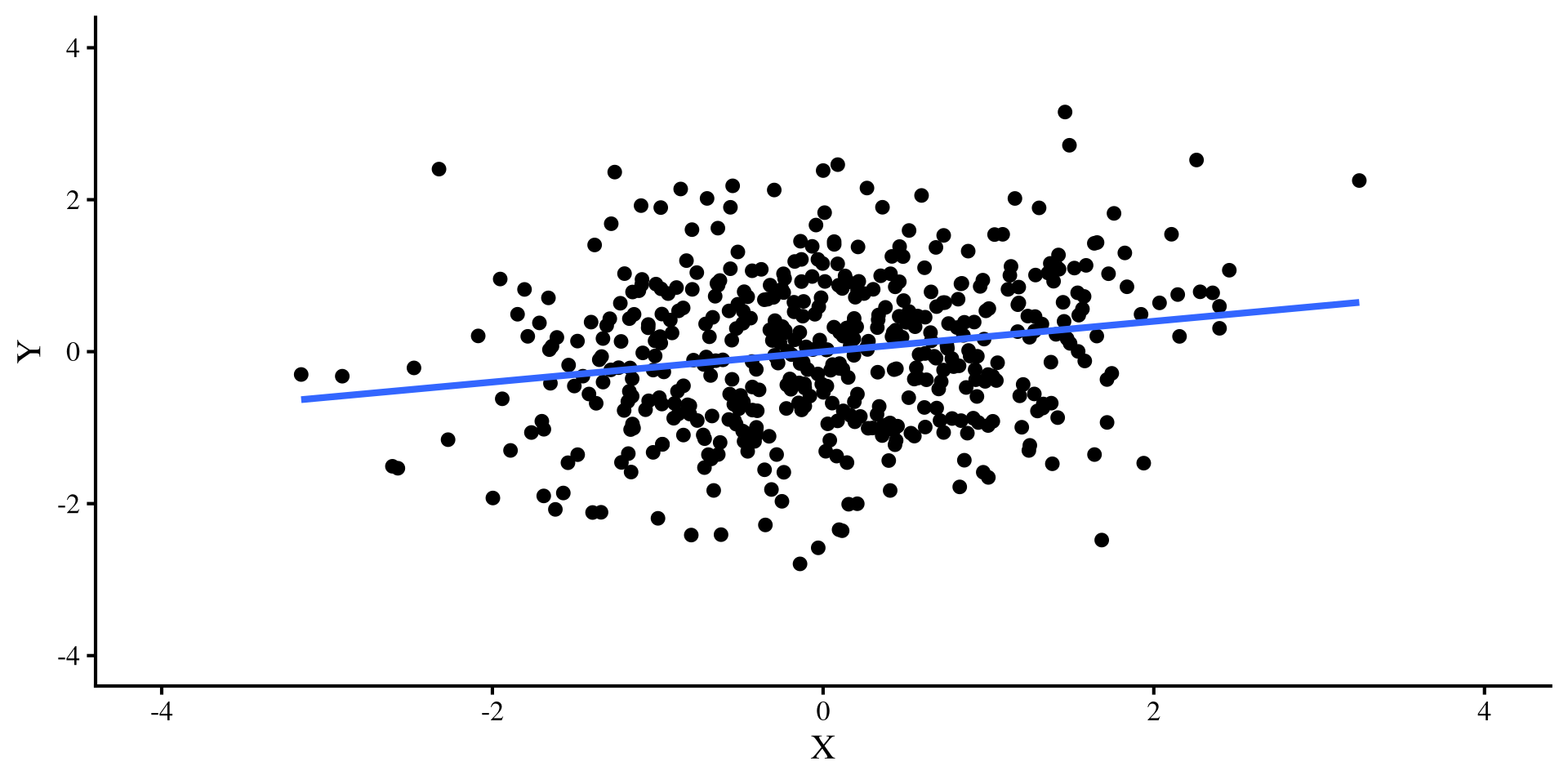

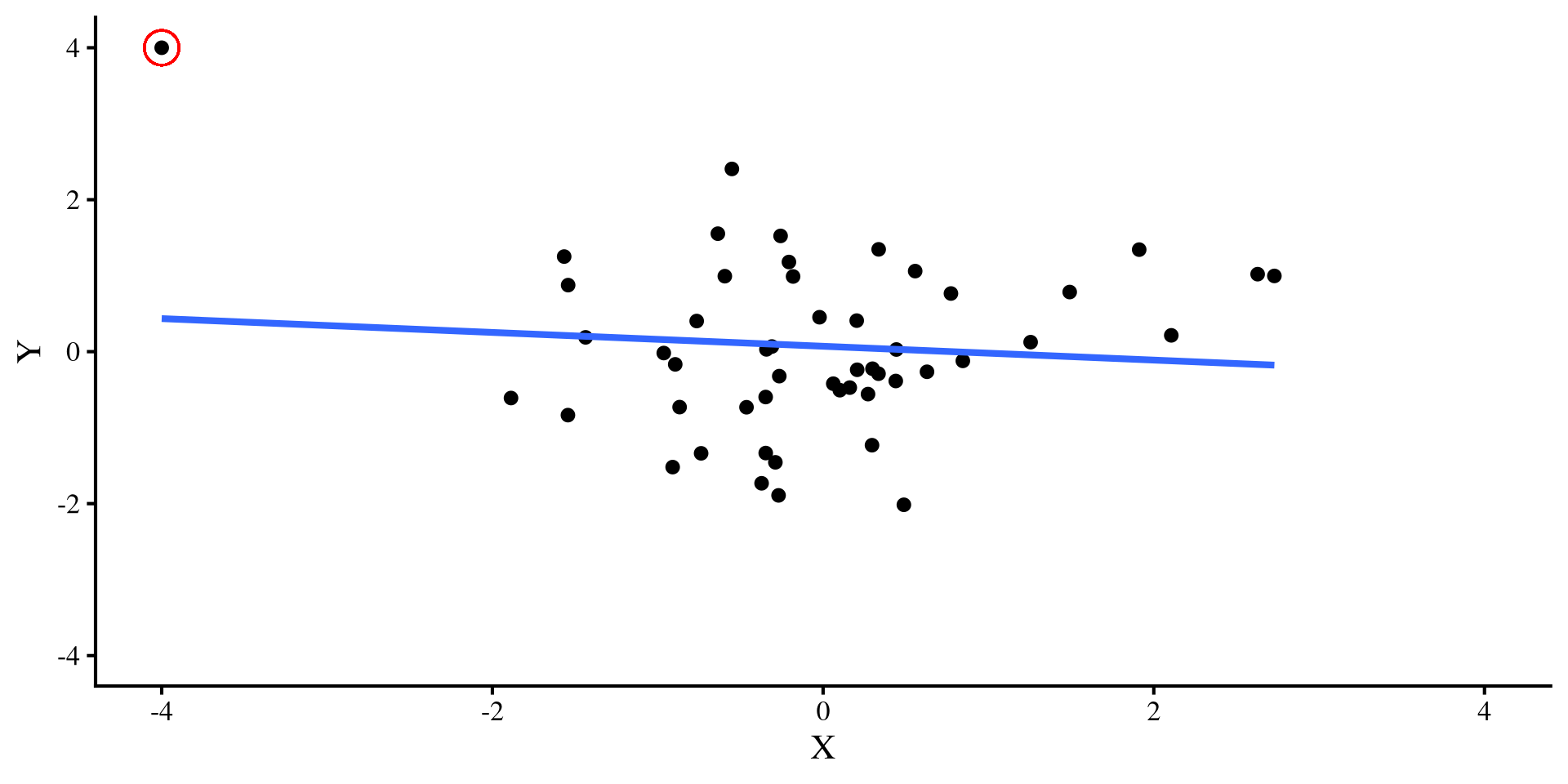

Adding a Single Outlier

A single outlier flips the relation between \(X\) and \(Y\) when \(N = 50\) 😱 but it doesn’t do much when \(N = 500\) 😇
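To make this concrete, here is a rough sketch that adds one extreme point to each simulated sample and refits the model. The outlier at \(X = 5\), \(Y = -5\) is an assumption I picked for illustration; it is not necessarily the point used in the plots. The helper `simulate_xy()` is also hypothetical.

```r
set.seed(28567)
cor_mat <- rbind(c(1, .2),
                 c(.2, 1))

# Hypothetical helper: draw n observations with exactly r = .2 in the sample
simulate_xy <- function(n) {
  d <- data.frame(MASS::mvrnorm(n = n, mu = c(0, 0), Sigma = cor_mat, empirical = TRUE))
  colnames(d) <- c("Y", "X")
  d
}

sim_dat_50  <- simulate_xy(50)
sim_dat_500 <- simulate_xy(500)

# Assumed outlier: very high on X, very low on Y
outlier <- data.frame(Y = -5, X = 5)

# Slope of Y on X after appending the outlier to each sample
slope_50  <- coef(lm(Y ~ X, data = rbind(sim_dat_50,  outlier)))[["X"]]
slope_500 <- coef(lm(Y ~ X, data = rbind(sim_dat_500, outlier)))[["X"]]

round(c(N_50 = slope_50, N_500 = slope_500), 3)
```

With this particular point, the slope should turn negative when \(N = 50\) and stay positive when \(N = 500\).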

Moral of the story?

So, what’s the moral of the story?

Small sample sizes: In small sample sizes, you should always check regression diagnostics, and carefully evaluate how extreme points may be influencing your results. If leaving or removing a single extreme point changes your results significantly, I would not have much faith in the robustness of the results.

Large sample sizes: The larger the sample size, the less influential extreme points will be. You still want to check residual plots, but you (usually) don’t need to be as concerned about the impact that extreme points may have on your results (assuming you don’t have that many extreme points).

But Wait! Not All Outliers Ruin Your Fun

Let’s try a different outlier, one with values of \(Y = 4\) and \(X = 0\). Check out what happens to the two regression lines now:

Ah, this outlier doesn’t do much to either regression 🤔 Actually, the only thing it does is slightly change the intercept, which is not a big deal usually.
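Here is a small sketch of why that happens, using the same simulated samples. The added point sits exactly at the mean of \(X\) (which is 0 here), so it has no pull on the slope; it only raises the mean of \(Y\) a little, which shifts the intercept by \(4/(N + 1)\). The helper `simulate_xy()` is hypothetical.

```r
set.seed(28567)
cor_mat <- rbind(c(1, .2),
                 c(.2, 1))

# Hypothetical helper: draw n observations with exactly r = .2 in the sample
simulate_xy <- function(n) {
  d <- data.frame(MASS::mvrnorm(n = n, mu = c(0, 0), Sigma = cor_mat, empirical = TRUE))
  colnames(d) <- c("Y", "X")
  d
}

sim_dat_50  <- simulate_xy(50)
sim_dat_500 <- simulate_xy(500)

# The outlier from this slide: extreme on Y, but average on X
outlier <- data.frame(Y = 4, X = 0)

fit_50  <- lm(Y ~ X, data = rbind(sim_dat_50,  outlier))
fit_500 <- lm(Y ~ X, data = rbind(sim_dat_500, outlier))

# Slopes stay at .2; only the intercepts move (by 4/51 and 4/501)
round(rbind(N_50 = coef(fit_50), N_500 = coef(fit_500)), 4)
```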

Back to Regression Diagnostics

As we saw from the previous examples, some outliers are more “dangerous” than others. Regression diagnostics give us different information (some more useful than others) about our data points. There are 3 general categories of regression diagnostics:

Leverage

Distance

Influence

You may see slightly different definitions for these 3 terms. I am drawing my definitions/examples from chapter 16 of Darlington & Hayes (2016).

Quick Regression Diagnostics Summary

- Hat values: For each data point, they measure how unusual the observation is compared to the average observation (leverage).

- Studentized Residuals: For each data point, it measures how large the residual is on a standardized scale (distance).

- DFFITS: For each data point, it measures how large the change in the predictions, \(\hat{Y}\), would be if that data point was removed (influence).

- Cook’s D: This is very similar to DFFITS, because it also measures how large the change in the predictions, \(\hat{Y}\), would be if a data point was removed (influence).

- COVRATIO: For each data point, it measures how large the average change in all the standard errors of the regression coefficients would be if that data point was removed (influence).

- DFBETAS: For each data point, it measures how much each individual regression coefficient would change if that data point was removed (influence).

DFBETAS is my favorite measure because for each data point, it tells you how much each regression coefficient will change if that data point is removed. Other measures give some average, which, for most purposes, is not as informative in my opinion.
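If you want most of these measures in a single table, base R's `influence.measures()` returns them all at once. The sketch below uses the built-in `mtcars` data as a stand-in, since the happiness data is not loaded in this snippet; the model `mpg ~ wt + hp` and the name `diag_table` are purely illustrative.

```r
# Stand-in model on a built-in data set (not the happiness data)
fit <- lm(mpg ~ wt + hp, data = mtcars)

# One call returns DFBETAS (dfb.*), DFFITS, COVRATIO, Cook's D, and hat values
infl <- influence.measures(fit)

# Studentized residuals are not part of that table, so add them as a column
diag_table <- cbind(infl$infmat, rstudent = rstudent(fit))

# One row per observation, one column per diagnostic
head(round(diag_table, 3))
```

Because the rows of `mtcars` are named, every row of the table is labeled with the car model, which is the same reason the country names are used as row names in this lab.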

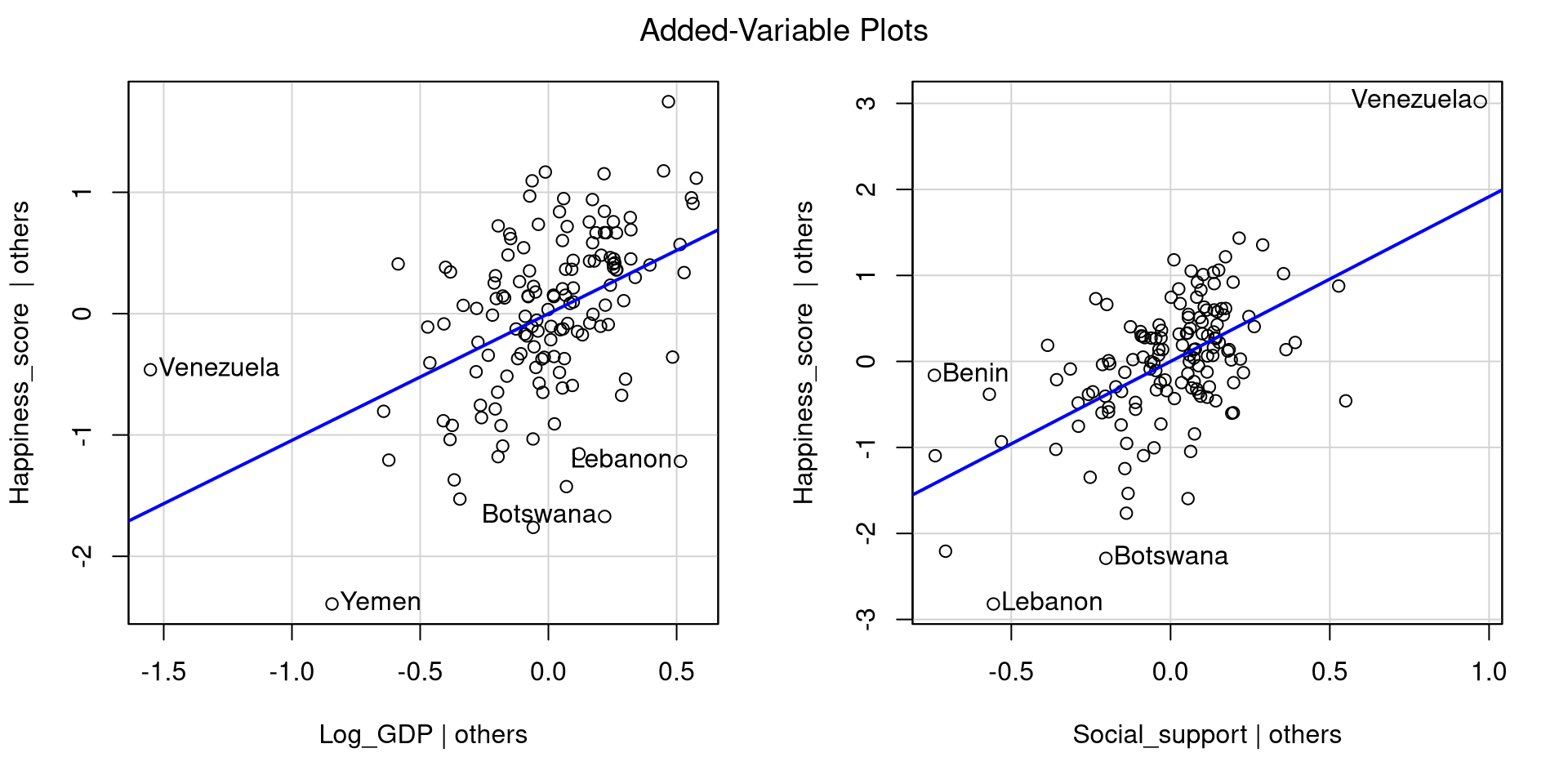

Our model

Let’s say that we want to look at how log_GDP and Social_support predict Happiness_score for each country. Let’s look at the added-variable plots directly:

The avPlots() function also identifies the 2 points with the largest residuals and the 2 points with the largest leverage (computed separately for each plot).
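As a reminder of what an added-variable plot shows, here is a minimal base R sketch that builds one panel by hand. It uses `mtcars` as a stand-in for the happiness data, so the variables `mpg`, `wt`, and `hp` are illustrative only. Both the outcome and the focal predictor are residualized on the other predictor, and the slope through those residuals should equal the partial slope from the full model.

```r
# Stand-in model on a built-in data set (not the happiness data)
fit <- lm(mpg ~ wt + hp, data = mtcars)

# Added-variable panel for wt: remove hp from both the outcome and wt
res_y <- resid(lm(mpg ~ hp, data = mtcars))  # part of mpg not explained by hp
res_x <- resid(lm(wt ~ hp, data = mtcars))   # part of wt not explained by hp

# Regression line of the panel
av_fit <- lm(res_y ~ res_x)

# The panel's slope matches the slope of wt in the full model
c(av_slope      = coef(av_fit)[["res_x"]],
  partial_slope = coef(fit)[["wt"]])
```

Calling `plot(res_x, res_y)` followed by `abline(av_fit)` would draw the panel itself.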

Hat values

Hat values measure how unusual an observation is compared to the average observation. They measure leverage, and the higher the values, the more unusual the observation given the set of predictors.

Here are all the hat values:

Leverage is just a way of determining whether an observation is unusual, but it does not necessarily mean that the observation is problematic. I generally don’t like guidelines of “how big is too big” for regression diagnostics because they don’t generalize well to real world cases. Here, Venezuela’s hat value is more than twice as large as the second largest one, so somewhat big relative to all other data points.
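A minimal sketch of computing hat values with base R's `hatvalues()`, again on `mtcars` as a stand-in for the happiness data. Hat values are the diagonal of the hat matrix \(H = X(X'X)^{-1}X'\), and they always sum to the number of regression coefficients, so the average hat value is \(p/n\). That average gives a natural reference point when you judge values relative to each other.

```r
# Stand-in model on a built-in data set (not the happiness data)
fit <- lm(mpg ~ wt + hp, data = mtcars)

hat_vals <- hatvalues(fit)

# The 3 observations with the highest leverage
head(sort(hat_vals, decreasing = TRUE), 3)

# Same values computed by hand from the design matrix
X <- model.matrix(fit)
H <- X %*% solve(crossprod(X)) %*% t(X)
all.equal(unname(diag(H)), unname(hat_vals))

# Hat values sum to the number of coefficients (intercept + 2 slopes)
sum(hat_vals)
mean(hat_vals)  # equals p / n
```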

Studentized Residuals

Studentized Residuals measure how large each residual is on a standardized scale (a t scale really, but makes no difference in practice). This is a measure of distance.

To compute studentized residuals for all your observations you use the rstudent() function. Here I only print the 3 largest and smallest values:

Here are all the residuals:

We are talking about residuals, so they can be both positive (above the regression line) and negative (below the regression line). Because the residuals are on a standardized scale, a value around 0 is an average residual, whereas anything beyond 2 in either direction (2 standard deviations from the mean) is somewhat large.
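Here is a short sketch of `rstudent()` on the `mtcars` stand-in model, together with a by-hand version. Each raw residual is divided by the residual standard deviation estimated without that observation, times \(\sqrt{1 - h_i}\), where \(h_i\) is the hat value. The name `manual` is my own.

```r
# Stand-in model on a built-in data set (not the happiness data)
fit <- lm(mpg ~ wt + hp, data = mtcars)

stud_res <- rstudent(fit)

# 3 largest and 3 smallest studentized residuals
head(sort(stud_res, decreasing = TRUE), 3)
head(sort(stud_res), 3)

# By hand: influence() returns hat values and leave-one-out residual SDs
infl   <- influence(fit)
manual <- resid(fit) / (infl$sigma * sqrt(1 - infl$hat))

all.equal(unname(manual), unname(stud_res))
```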

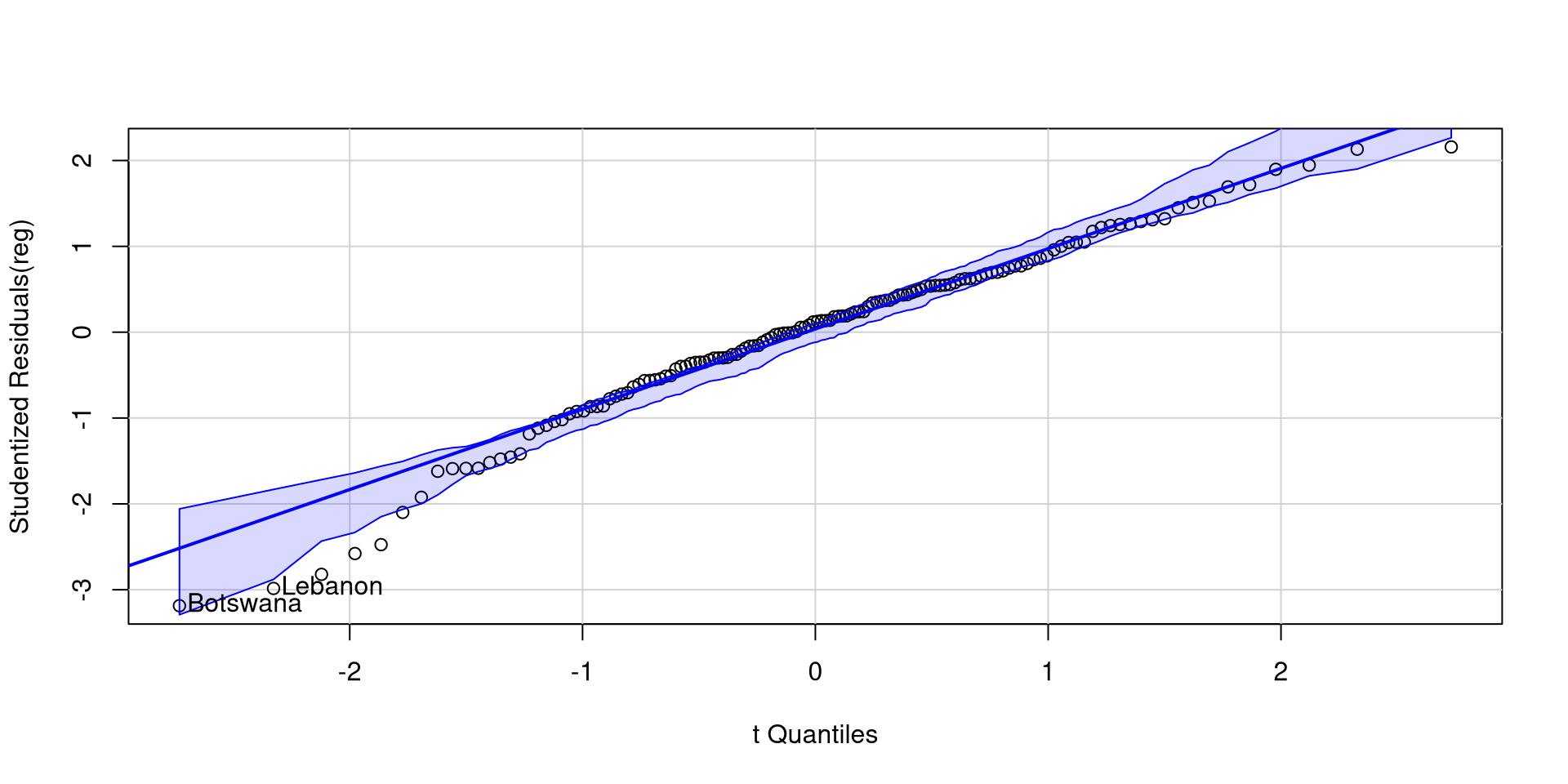

Studentized Residuals? QQplot them

Studentized residuals are just the residuals placed on a standardized scale. Some large residuals are expected and are usually not that big of a deal. The car package has a quick function to create a QQplot of studentized residuals:

DFFITS

DFFITS measure how large the change in the predictions, \(\hat{Y}\), would be on average if that data point was removed (influence).

To compute DFFITS for all your observations you use the dffits() function. Here I only print the 3 largest and smallest values:

Here are all the DFFITS values:

Predictions can change either positively or negatively, so we should look at extreme values both ways. You can think of DFFITS as a measure that summarizes how much the whole regression model changes when a data point is removed.
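A minimal sketch with `dffits()` on the `mtcars` stand-in model. It also shows how DFFITS combines distance and leverage: each value equals the studentized residual multiplied by \(\sqrt{h_i / (1 - h_i)}\), so a point needs both a sizable residual and some leverage to move the predictions.

```r
# Stand-in model on a built-in data set (not the happiness data)
fit <- lm(mpg ~ wt + hp, data = mtcars)

dffits_vals <- dffits(fit)

# 3 largest and 3 smallest DFFITS values
head(sort(dffits_vals, decreasing = TRUE), 3)
head(sort(dffits_vals), 3)

# By hand: studentized residual (distance) times a leverage factor
h      <- hatvalues(fit)
manual <- rstudent(fit) * sqrt(h / (1 - h))

all.equal(unname(manual), unname(dffits_vals))
```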

Cook’s D

Cook’s D is very similar to DFFITS because it also summarizes the change in the predictions, \(\hat{Y}\), if a given data point was removed (influence). The difference is that Cook’s D is always positive, and larger values imply larger influence.

Here are all the values:

Data points with large DFFITS (in magnitude) will have large Cook’s D, so the interpretation is similar. Because Cook’s distance is always positive, it may be easier to interpret for some. You can confirm that the countries on this slide are the same countries with the largest DFFITS in magnitude on the previous slide.
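The sketch below computes Cook’s D with `cooks.distance()` on the `mtcars` stand-in model and reproduces it by hand as \(D_i = \frac{r_i^2}{p} \cdot \frac{h_i}{1 - h_i}\), where \(r_i\) is the standardized residual from `rstandard()` and \(p\) is the number of coefficients. It then lists the most extreme observations on both Cook’s D and \(|\mathrm{DFFITS}|\) so you can compare the two rankings.

```r
# Stand-in model on a built-in data set (not the happiness data)
fit <- lm(mpg ~ wt + hp, data = mtcars)

cooks_d <- cooks.distance(fit)

# By hand: squared standardized residual times a leverage factor, divided by p
p      <- length(coef(fit))
h      <- hatvalues(fit)
manual <- rstandard(fit)^2 * h / ((1 - h) * p)

all.equal(unname(manual), unname(cooks_d))

# Observations with the largest Cook's D and the largest |DFFITS|
names(head(sort(cooks_d, decreasing = TRUE), 3))
names(head(sort(abs(dffits(fit)), decreasing = TRUE), 3))
```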

COVRATIO

COVRATIO measures how large the average change in all the standard errors of the regression coefficients would be if that data point was removed. It’s a measure of influence.

To compute COVRATIO values for all your observations you use the covratio() function. Here I only print the 5 largest and smallest values:

Here are all the values:

You may notice that COVRATIO values hover around 1. In fact, \(\mathrm{COVRATIO} = 1\) means that removing the point has no impact whatsoever on the standard errors. On the other hand, \(\mathrm{COVRATIO} < 1\) means that removing the point will make standard errors smaller, while \(\mathrm{COVRATIO} > 1\) means that removing the point will make standard errors larger.
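To see where the number comes from, here is a sketch that reproduces one COVRATIO value by actually dropping the observation. Formally, COVRATIO is the determinant of the coefficient covariance matrix without the point divided by the determinant with all points, which is one way of summarizing the change in all standard errors at once. The data (`mtcars`) and the names `fit_drop` and `manual` are stand-ins of my own.

```r
# Stand-in model on a built-in data set (not the happiness data)
fit <- lm(mpg ~ wt + hp, data = mtcars)

cov_ratio <- covratio(fit)

# 5 largest and 5 smallest COVRATIO values
head(sort(cov_ratio, decreasing = TRUE), 5)
head(sort(cov_ratio), 5)

# Pick the observation with the smallest COVRATIO and refit without it
i        <- which.min(cov_ratio)
fit_drop <- lm(mpg ~ wt + hp, data = mtcars[-i, ])

# Ratio of determinants: covariance matrix without the point / with the point
manual <- det(vcov(fit_drop)) / det(vcov(fit))

all.equal(manual, unname(cov_ratio[i]))
```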

DFBETAS

DFBETAS represent the change in the intercept and each slope if that data point was removed (influence).

DFBETAS provide more information than the other measures. Removing a data point may have stronger influence on one of the slopes, but not as much influence on another slope or the intercept.

You can click on the column names in the table on the left to order the table by each column and see the more extreme values for each column.

The change is in standard deviation units, so, for example, removing Venezuela changes the slope of log_GDP by -1.083 standard deviations and that of Social_support by .865 standard deviations. This is a pretty large change.
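Here is a hedged sketch of what `dfbetas()` computes, using `mtcars` as a stand-in for the happiness data. For one observation, it refits the model without that point and scales the change in each coefficient by a standard error based on the leave-one-out residual standard deviation. I only compare magnitudes here so the check does not depend on the sign convention; the names `fit_drop`, `se_scale`, and `manual` are my own.

```r
# Stand-in model on a built-in data set (not the happiness data)
fit <- lm(mpg ~ wt + hp, data = mtcars)

# One row per observation, one column per coefficient
dfb <- dfbetas(fit)
head(round(dfb, 3))

# Observation with the largest impact on the slope of wt
i        <- which.max(abs(dfb[, "wt"]))
fit_drop <- lm(mpg ~ wt + hp, data = mtcars[-i, ])

# Scale: leave-one-out residual SD times sqrt of the diagonal of (X'X)^-1
X        <- model.matrix(fit)
se_scale <- sigma(fit_drop) * sqrt(diag(solve(crossprod(X))))

# Size of the change in each coefficient, in those standardized units
manual <- abs(coef(fit) - coef(fit_drop)) / se_scale

all.equal(unname(manual), unname(abs(dfb[i, ])))
```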

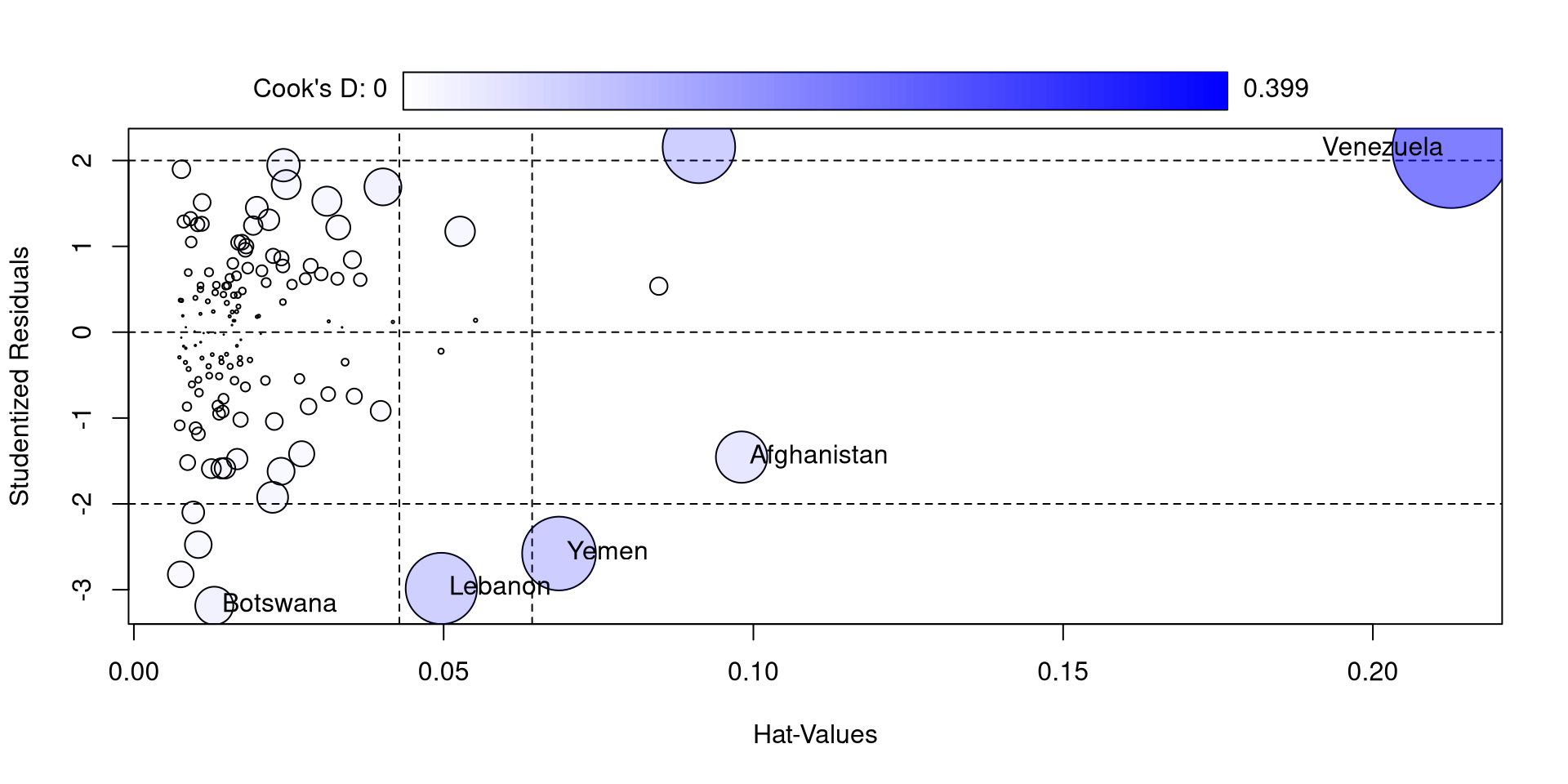

Influence plot with car

The influencePlot() function from the car package also offers a nice visualization of studentized residuals, hat values, and Cook’s D at the same time:

So, compared to the other countries, Venezuela is really high in all of these measures. If you look at the log_GDP and Happiness_score values for Venezuela you may find something a bit strange (maybe proof that money is not needed for happiness)

I also feel like the function is not very aptly named because only Cook’s D is a measure of influence 🫣

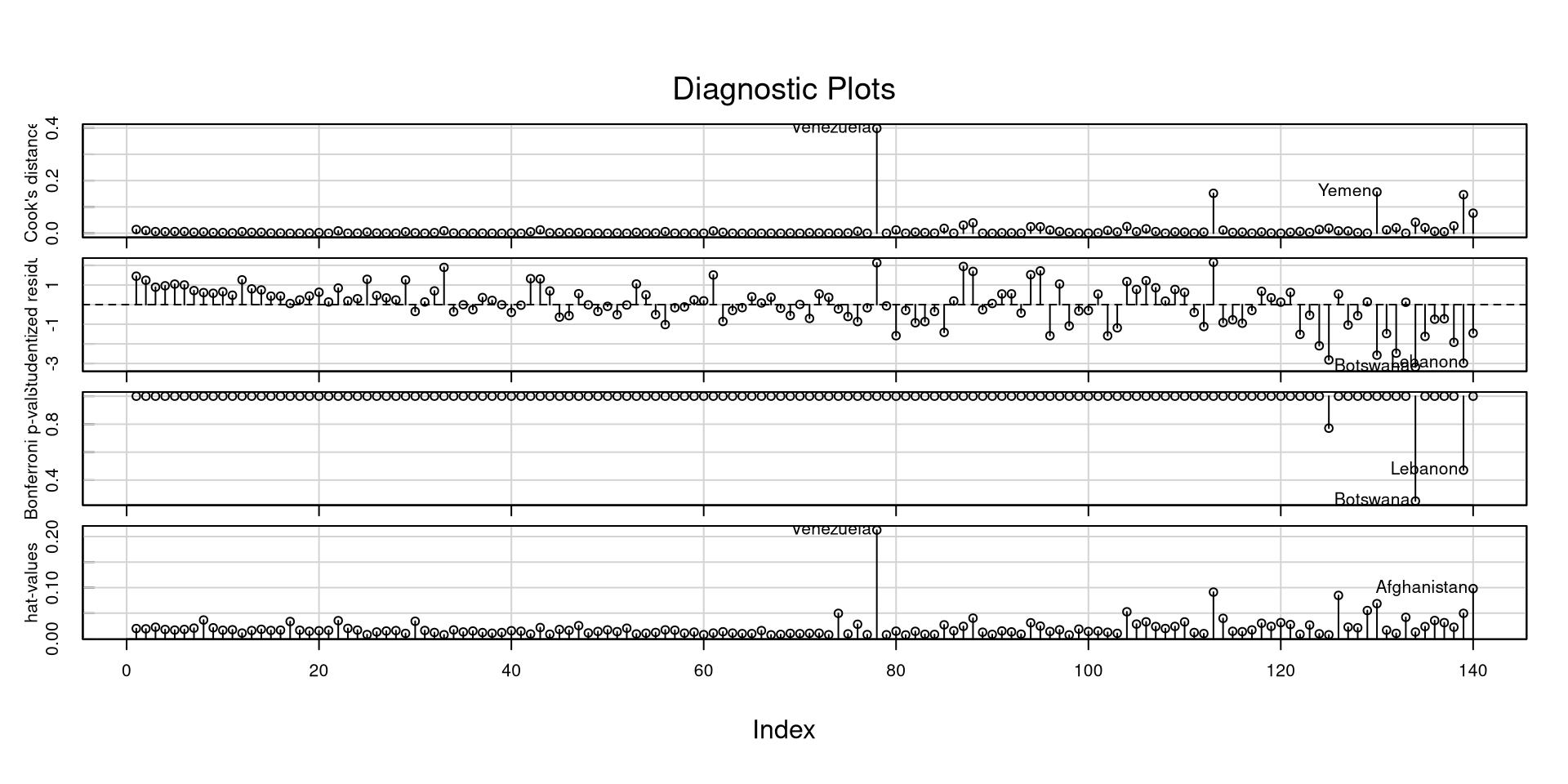

Another Neat car Plot

The influenceIndexPlot() function offers a visualization of a bunch of regression diagnostics. This helps visualize which observations are extreme relative to other observations:

With a good deal of these measures, I would not look at “suggested cutoffs”. Following cutoffs blindly (1) leads you to not think about what you are doing, and (2) leads you to make bad decisions and mistakes in many scenarios.

Especially for some of these measures, you want to look at how large they are relative to all other observations.

References
